By the end of January 2026, the new social media platform UpScrolled had become the most downloaded free app on Apple’s App Store. After accruing approximately 150,000 users in its first six months of operation, UpScrolled suddenly surged to over 2.5 million users. This platform migration largely occurred at the expense of TikTok. Many users have seemingly expressed disaffection with Oracle founder Larry Ellison’s acquisition of a majority stake in TikTok’s US operations, alongside alleged censorship and the shadow-banning of users critical of Israel’s operations in Gaza and the conduct of US Immigration and Customs Enforcement (ICE). While TikTok has stated that no censorship transpired with regard to ICE (any problems experienced by users were said to be the outcome of a week-long technical outage), the beneficiary has nevertheless been UpScrolled. Backed by the Tech for Palestine Incubator and founded by Palestinian-Australian app developer Issam Hijazi, UpScrolled prides itself on being a bastion of free speech, particularly as it pertains to Palestine.

Amid this upsurge in users, however, there have been many voices espousing violent extremist discourse. Indeed, while swathes of disaffected users have abandoned TikTok, a number of extreme right-wing accounts have cited their own grievances towards other platforms, especially X, as the reason for joining UpScrolled. As one accelerationist pithily phrased it in their bio: “X Sucks”. Another account expressing adoration for Hitler branded themselves a “Refugee from jewish X [sic]”, while a third voiced their hope that UpScrolled would be “less gay and pozzed than Twitter”. Others drew on antisemitic claims to justify their platform migration. As one user pondered: “Curious if others have recently gotten suspended on X. Think they intend to shut it down because of the Epstein emails?” Appended to their post was a caricatured image of a Jewish man pressing a button entitled “SHUT IT DOWN”. In a similar vein, many others have opted to derisively refer to Elon Musk as “Jewlon”.

Against this backdrop, this Insight explores the recent upsurge in traffic garnered by UpScrolled, focusing specifically on the upload of right-wing accelerationist content. The sheer extent and extremity of the material highlighted below reveal severe deficiencies in the platform’s approach to content moderation: the platform hosts not only “awful but lawful” content, but also material which arguably veers towards outright glorification of violent extremism and proscribed terrorist organisations.

An Emerging Haven for Extreme Right-Wing Content

A keyword search was undertaken on UpScrolled that drew on a dictionary of extreme right-wing terminology, coupled with a snowballing approach across accounts that followed one another. This open-source approach surfaced no shortage of extremist material that could be classified, depending on jurisdiction, as awful but lawful. This included a litany of far-right antisemitic material, from Holocaust denialist memes, many of which were generated with artificial intelligence (AI), to conspiratorial content about supposed Jewish machinations. Moreover, other white supremacists have shared historical imagery carrying an unmistakable celebratory or reverential subtext, whether of Hitler, British Union of Fascists leader Oswald Mosley, American neo-Nazi George Lincoln Rockwell, or the rank-and-file of the Ku Klux Klan. Many more regurgitated staple iconography and slogans, including the SS-Totenkopf, swastikas, and the oft-cited 14 words (“We must secure the existence of our people and a future for white children”).

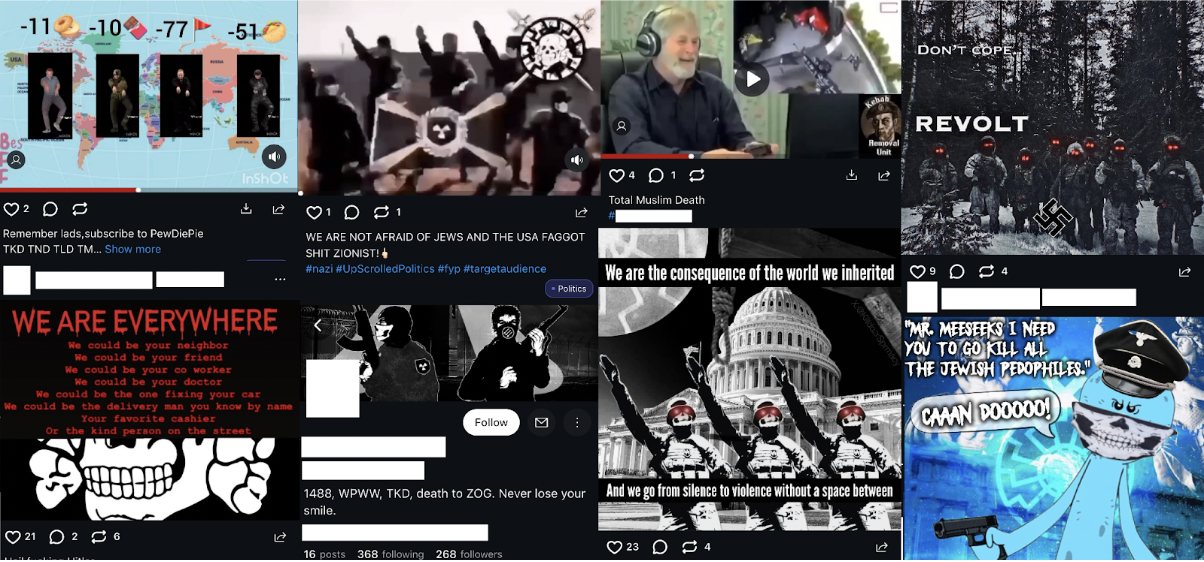

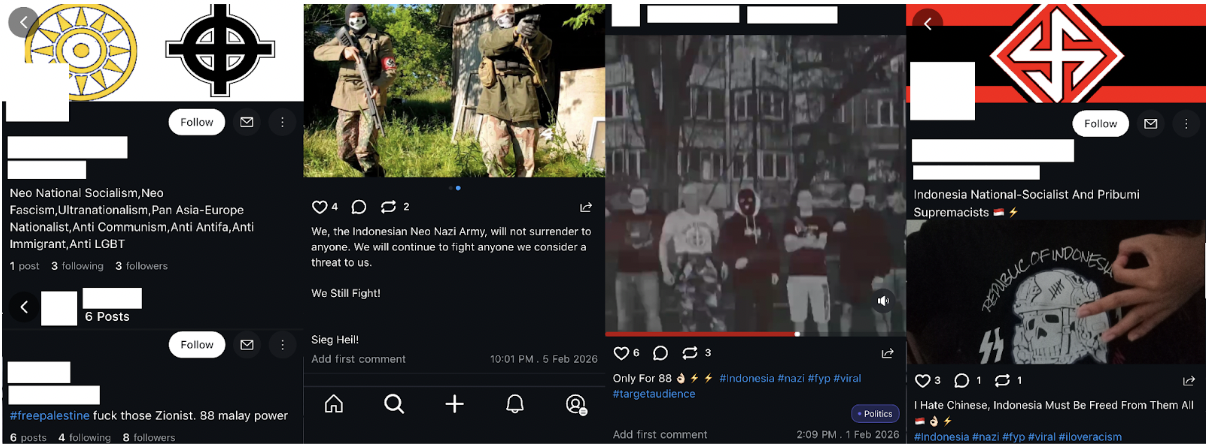

Of particular concern, some users veered towards outright glorification of violent extremism and proscribed organisations (Fig. 1). This was especially common among those espousing accelerationist sentiments; that is, a desire to hasten society’s collapse through violence in order to bring about a white ethnostate. For instance, one user self-described in their bio as an “Ethnic cleansing accelerationist”. In an ominous if implicit call to revolutionary action, one gun-laden clip branded viewers as part of the “new founding fathers”. Some shared positive references to the extremist slogan RWDS (Right Wing Death Squad) and a looming “Boogaloo” (i.e., second American civil war); others outright called for the murder of Jews. One video glorified neo-Nazi James Mason (a designated terrorist under Canadian law), while several accounts shared images lauding Atomwaffen Division, a proscribed terrorist organisation in Australia, Canada, and the UK. Curiously, a few accounts ostensibly belonging to neo-Nazis from Indonesia and Malaysia were identified, reflecting the growing transnational spread of neo-Nazi ideas and aesthetics to Southeast Asia (Fig. 2).

Perhaps most alarming are those accounts actively lauding past terrorists. For example, one accelerationist account posted a montage celebrating the Norwegian terrorist Anders Breivik, shared cartoonish memes of the Christchurch terrorist Brenton Tarrant, and uploaded memeified footage of that massacre. Furthermore, uncensored first-person footage and edits glorifying the Christchurch, Buffalo, and Halle attacks were found strewn across several accounts. The number of individuals posting these graphic clips was comparatively small among the several dozen extreme right-wing accounts studied, and at least some have disappeared, likely due to moderation efforts. Nevertheless, the presence of such blatant terrorist and violent extremist (TVE) content remains a serious concern.

An Insufficient Stop-Gap Measure

It is worth noting that numerous violent extremist clips appeared on-screen as a blurred still with the following warning superimposed: “Sensitive Content: May contain graphic or disturbing material”. While this disclaimer offers a useful warning for those users who would prefer to scroll past questionable content, one could alternatively ignore the message by simply clicking on the warning and watching the unredacted video in full. As such, content moderation measures short of takedowns appear to be applied in ways that contradict UpScrolled’s stated policies or reflect inconsistencies in identifying the severity of harmful content.

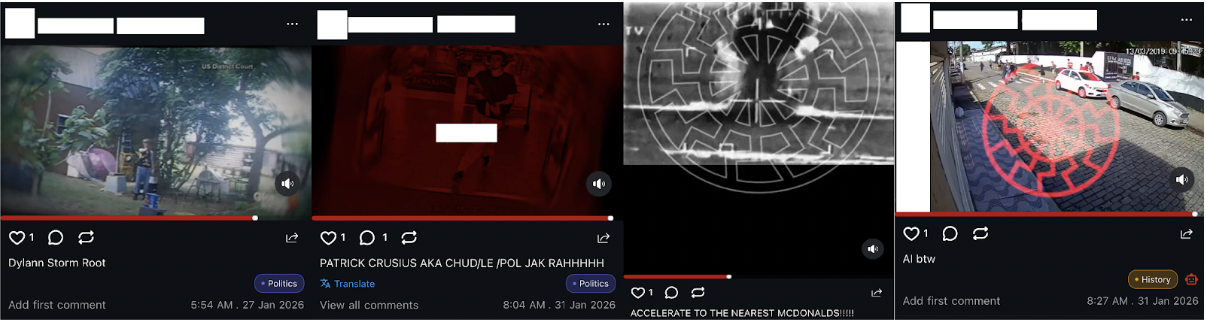

The posts by one ostensibly accelerationist user are illustrative. The account in question contained repeated uploads to which “Sensitive Content” warnings were appended. These included imagery glorifying Breivik, a perverse AI-generated clip of the Virginia Tech shooter dancing, footage from the 2022 Buffalo attack, and even a video compilation of multiple murders captioned with the phrase: “SINISTER 09A AI LARP” (i.e., Order of Nine Angles AI live-action role play) (Fig. 3). In this last case, the caption presents a nominal façade, likely intended as a half-hearted way to fool content moderators by passing the video off as a perverse joke: an AI-generated replica of content from the Order of Nine Angles (“a decentralized, satanic, neo-Nazi organization”) rather than the real thing. Cross-referencing the timestamps on the CCTV footage within the video confirmed, as expected, that the montage comprises real clips from a range of school and other mass shootings. The same user uploaded multiple other videos using the same lazy, yet strangely effective, AI façade. In one clip depicting footage of the Suzano school massacre superimposed with neo-fascist iconography, they merely appended the words “AI btw [by the way]”.

That these uploads received content warnings rather than being taken down is itself concerning. Moreover, other clips posted by this user, including edits of individuals often deified in accelerationist and nihilistic extremist communities, such as Dylann Roof and Patrick Crusius, were neither removed nor given a similar content warning, which only raises further questions.

Implications

It is unclear whether the popularity of UpScrolled amongst disaffected TikTok users will last, or whether the platform’s growth will plateau or fall away. It would also be premature to conclude whether extremist factions have merely found a temporary home, or a more lasting alt-platform on which to reside. In any case, it is apparent that UpScrolled has emerged as a hospitable, if nascent, space for TVE content, alongside a wider raft of virulently conspiratorial, antisemitic discourse. Attracting these latter voices is perhaps not overly surprising, given the emphasis UpScrolled has placed on providing a platform for anti-Zionist voices. While certainly not all debate transpiring on UpScrolled regarding Israel and Palestine is beyond the pale, there is nevertheless a sizable extremist milieu whose output thoroughly exceeds the reasonable boundaries of civic debate and valid critique of Israeli policy.

Against the backdrop of the foregoing discussion, two observations are worth flagging. Firstly, given that the platform hosts blatant and readily recognisable TVE content, including from high-profile attacks, UpScrolled would benefit from immediate steps to take down and curtail such uploads. With the platform’s growing user base, this is important from a preventing and countering violent extremism (P/CVE) and online safety angle. It should matter equally to UpScrolled from a pure liability and regulatory perspective: a laissez-faire approach to free speech is one matter, but this does not grant the right to host content celebrating violent extremists. UpScrolled is an Australian company, and it acknowledges that it must, when required by Australian law, disclose user data. Content found on the platform celebrated organisations ranging from Hamas and Hezbollah, through to ISIS and Atomwaffen Division (also known as the National Socialist Order). All such groups are banned terrorist organisations under Australian legislation. Accordingly, inadequate moderation may open UpScrolled to increased scrutiny by the eSafety Commissioner, Australia’s independent regulatory agency responsible for online safety, alongside its international counterparts, including the UK’s Ofcom.

Crucially, the platform’s current Rules and Policies explicitly prohibit “[t]hreats, glorification of harm, or support for terrorist/violent groups”. To uphold this minimum standard, UpScrolled must rapidly bolster its resources dedicated to Trust and Safety and ensure that implementation meets its stated policies. Such progress will be essential in light of the platform’s increased traffic, which has certainly led to a rise in the scale of content and ostensibly overwhelmed its moderators. Indeed, it has been reported that UpScrolled only recently moved from a single moderator to more than 20.

Further growth in its team is urgently required, but so too are improvements in its approach. This includes taking down content that condones or venerates violent extremism rather than merely appending “Sensitive Content” warnings to it. Moreover, existing methods must be supplemented by automating and streamlining the takedown of flagrantly terrorist content. A recent post by UpScrolled’s official account acknowledged the influx of violative content and called on users to “[k]eep reporting what you see”. While details about the moderation methods and tools used by UpScrolled remain unclear, suffice it to note that reliance on user reporting is a highly reactive approach and can only be a partial solution. One suggestion in this respect is to engage with Tech Against Terrorism’s Terrorist Content Analytics Platform, which may offer a starting point for streamlining such takedowns and mitigating this emerging challenge.

Secondly, given the recent attention UpScrolled has gained, further research and monitoring of the platform are warranted by those engaged in preventing and countering violent extremism. This is essential not only to gauge whether progress is made in curtailing TVE uploads, but also to trace broader trends in extremist communities as they migrate from one platform to another. At present, the use and abuse of UpScrolled by such individuals is still in a nascent, experimental phase. After all, newly created white supremacist accounts have actively sought to test the platform’s commitment to a maximalist conception of free speech that would permit unbridled hate. One user, for example, engaged in what they referred to as “shitpost pentesting”; this amounted to merely posting egregious statements (such as “Kill all Palestinians” or “The ‘holocaust’ is a hoax”) and gauging whether their account suffered any consequences. Whether these actors continue to flock to the platform, or migrate elsewhere, will depend largely on UpScrolled’s response and, thus, whether it passes or fails the supposed “free speech” test from the perspective of such extremists.

—

Kye Allen holds a doctorate in International Relations from the University of Oxford and is a research associate at pattrn.ai (Pattrn Analytics & Intelligence). This article was written in his personal capacity. His primary research interests focus on far-right extremism, both historical and contemporary, and the intersection between technology, political violence, and extremism.
