This Insight examines how extremist aesthetics and narratives inspired by jihadist groups are produced, circulated, and normalised within digital communities hosted on Discord. This platform is primarily designed as a communication space for gaming and social interaction. However, its structural features (including semi-private servers, flexible communication tools, and low barriers to entry) have increasingly positioned it as a space in which ideological content can be embedded in everyday online practices. This analysis focuses on how these environments facilitate the formation of closed communities that merge play, identity, and ideology, creating conditions conducive to early-stage radicalisation processes.

The study pays particular attention to communities in which minors are present, highlighting how extremist content is introduced and sustained among younger users. Rather than overt operational planning, the observed dynamics align with early phases of online radicalisation, where extremist narratives are framed as cultural, aesthetic, or playful elements rather than explicit calls to violence. The Insight explores three interrelated dimensions: the role of Discord’s moderation and governance framework, the emergence of echo chambers in these communities, and the creation of a collective identity that binds the group.

Methodologically, this research is based on qualitative discourse analysis of approximately 700 messages, around 200 of which contained explicit extremist or jihadist content, collected from publicly accessible Discord servers linked to jihadist-inspired communities. The dataset also includes a wide range of visual materials, such as images, graphic edits, and videos, some of which reproduce recognised jihadist aesthetics or disseminate official propaganda. Notably, a significant share of the visual material is user-generated and adapts extremist ideologies to Gen-Z digital culture. The analysis focuses on three case studies selected for their recurring visibility across platforms and their role as reference points within these online ecosystems.

All data were collected through open-source research, relying exclusively on publicly available servers whose invitation links circulated primarily on social media platforms, particularly TikTok (Figure 1). The observation period spans from September to December 2025, with some servers being voluntarily deleted by their members in October 2025, while others remained active at the time of analysis. Notably, there was limited evidence of systematic content removal by Discord itself. In most cases, content disappearance resulted from user-initiated deletion to avoid moderation, rather than visible enforcement actions by Discord.

Figure 1: Screenshot of a jihadist-related Discord server being promoted on TikTok.

By examining how extremist content adapts to platform functionalities and Gen-Z digital culture, this Insight aims to bridge the gap between formal moderation frameworks and their practical effectiveness in dynamic online environments. The analysis highlights how existing governance measures often struggle to keep pace with rapidly evolving forms of digital socialisation and extremist adaptation. By documenting these discrepancies, the Insight lays the empirical groundwork for identifying areas where platform responses, particularly in contexts involving minors and semi-private communities, require further refinement.

Discord’s Moderation Framework

Discord maintains a zero-tolerance policy towards violent extremism, prohibiting the organisation, promotion, or support of extremist activities. These rules are articulated in its Community Guidelines and expanded through a specific violent extremism policy, which also enables the platform to take action based on credible off-platform behaviour when there is a clear risk of physical harm.

According to Discord’s 2024 H1 Transparency Report, the platform took action against 17,567 distinct accounts for violent extremism, disabling 16,309 accounts and removing 2,607 servers during this period. Although reports related to violent extremism represented only 0.38% of total user reports, the rate of account and server removals indicates that this category is treated as a priority area for enforcement. Moderation combines human review by Trust & Safety teams with automated systems and server-level tools, alongside cooperation with external partners and law enforcement when necessary.
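As a rough aid to reading these figures, the short calculation below derives the share of actioned accounts that were disabled. It uses only the two account figures quoted above; the variable names are illustrative, and the resulting percentage is a derived value rather than a figure taken from the report.

```python
# Figures as quoted above from Discord's 2024 H1 Transparency Report.
accounts_actioned = 17_567
accounts_disabled = 16_309

# Share of actioned accounts that ended up disabled
# (a derived value, not a figure stated in the report as cited here).
disabled_share = accounts_disabled / accounts_actioned

print(f"Disabled share of actioned accounts: {disabled_share:.1%}")
# prints "Disabled share of actioned accounts: 92.8%"
```

On these numbers, roughly nine in ten accounts actioned for violent extremism were disabled outright.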

In February 2026, Discord announced the global rollout of a “Teen Default Experience”, introducing age-assurance mechanisms and stricter default safety settings for users under 18. These measures restrict access to age-gated spaces, apply content filtering by default, and limit certain interaction settings unless a user is verified as an adult, reinforcing the platform’s preventative approach to harmful and illicit content, particularly for minors. Furthermore, the platform’s Transparency Report for the EU Terrorist Content Online Regulation (TCO), published on 1 March 2026, set out the specific proactive measures taken to remove terrorist and violent extremist (TVE) content. These include machine learning models trained to identify potential violations and bad actors, image hashing technology, and keyword monitoring, all of which flag content for human review (pp. 3-4).

However, empirical observation suggests that users in these potentially extremist groups actively adapt their behaviour to evade moderation. A common strategy is the use of voice channels, which are perceived as less susceptible to automated detection. In text channels, users frequently disguise sensitive terms by substituting numbers or symbols for letters (for example, I$I$), bypassing keyword filters while remaining intelligible to insiders.
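The character-substitution tactic described above can be illustrated with a minimal sketch of how a keyword filter might fold common swaps back into plain letters before matching. This is not Discord’s implementation, which is not public; the substitution table, the function names `normalise` and `contains_watch_term`, and the watch-list are all hypothetical and chosen purely for illustration.

```python
import re
import unicodedata

# Hypothetical table of symbols and digits mapped to the letters they often replace.
SUBSTITUTIONS = str.maketrans({
    "$": "s", "5": "s",
    "1": "i", "!": "i",
    "0": "o", "3": "e",
    "4": "a", "@": "a",
})


def normalise(text: str) -> str:
    """Fold a message to lowercase letters and whitespace, undoing common swaps."""
    folded = unicodedata.normalize("NFKC", text).lower()
    folded = folded.translate(SUBSTITUTIONS)
    # Drop every character that is not a lowercase ASCII letter or whitespace.
    return re.sub(r"[^a-z\s]", "", folded)


def contains_watch_term(text: str, watch_terms: set[str]) -> bool:
    """Return True if any whitespace-separated token matches a watch-list term."""
    return any(token in watch_terms for token in normalise(text).split())


# Illustrative watch-list (an assumption, not a real moderation list).
WATCH_TERMS = {"isis"}

print(normalise("I$I$"))                                   # isis
print(contains_watch_term("join I$I$ now", WATCH_TERMS))   # True
print(contains_watch_term("this is fine", WATCH_TERMS))    # False
```

Folding of this kind inevitably produces false positives (a digit string such as 1515 folds to the same term), which is one reason a match is better treated as a flag for human review than as grounds for automatic action.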

Similar tactics appear in shared media, where videos or images are posted behind spoiler or content-warning tags to reduce immediate scrutiny (Figure 2). Additionally, some communities periodically delete messages or channel histories, thereby limiting the accumulation of reportable content (Figure 3). However, while these strategies may generate a short-term reduction in visibility, they do not necessarily ensure sustained impunity. Pattern-based detection systems can identify behavioural signals that extend beyond isolated keywords or individual posts, meaning that such tactics often function as temporary friction rather than structural shields against enforcement.

Figure 2: Screenshot showing a user sharing an edit of an official jihadist propaganda video behind a spoiler tag, apparently to avoid detection.

Figure 3: Screenshot of a conversation in which a user offers to ‘clean’ the server to evade moderation.

Taken together, these dynamics suggest that although Discord has developed a robust formal moderation framework, moderation pressure tends to reshape behaviour rather than eliminate it entirely. Even in a predominantly proactive system, enforcement often operates in a cyclical pattern: as detection improves, actors adapt. Arguably, then, moderation does not eradicate extremist expression so much as push it into less visible, more socially embedded forms that can further complicate detection and long-term disruption.

Echo Chambers

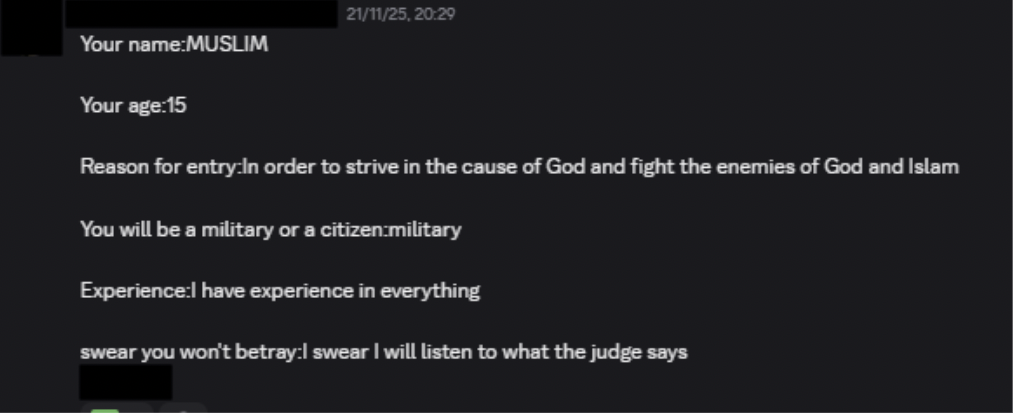

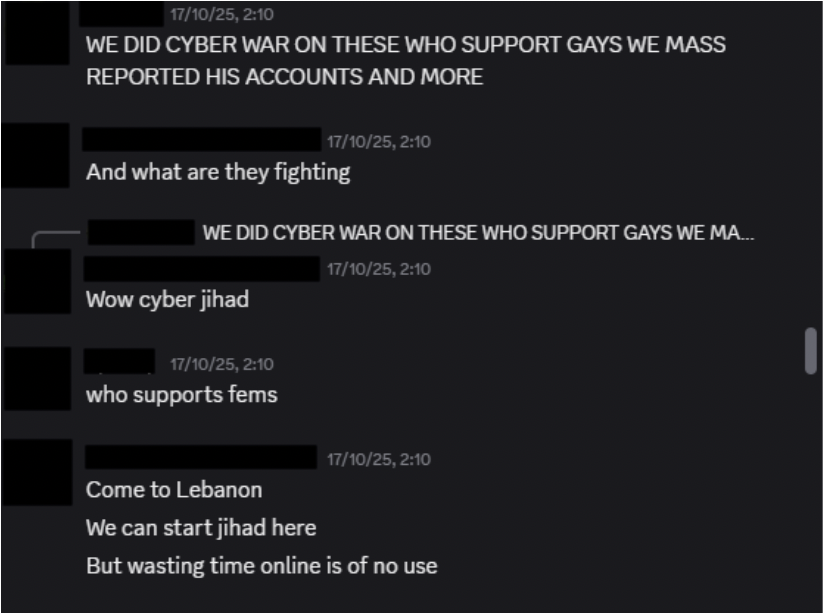

The analysed Discord servers function as effective echo chambers in which users are repeatedly exposed to homogeneous extremist narratives that are reinforced through continuous interaction and limited exposure to alternative viewpoints. Entry into these spaces typically occurs via TikTok or similar mirror platforms, where links to servers circulate widely (Figure 4). Once inside, users can become embedded in extremist dynamics until they are banned or the server is removed. Removal rarely disrupts the process, however, as users quickly return by creating new accounts or establishing new servers with the same ideological focus. Throughout this cycle, minors were repeatedly observed in these communities despite ongoing moderation efforts (Figures 5 and 6).

Figure 4: Screenshot showing how Discord servers are shared on other social networks, in this case, TikTok.

Figure 5: Screenshot of a user’s message explaining his reasons for joining a gaming Discord server where jihadist behaviour is present and stating his age (15 years old).

Figure 6: Screenshot of an everyday interaction between minors in a jihadist-related community.

This self-reinforcing structure aligns with Fathali M. Moghaddam’s Staircase to Terrorism model, particularly its early stages. Observations indicate that users remain largely within the three lower steps of the staircase, with no evidence of operational planning but clear signs of early-stage online radicalisation. At the first step, feelings of grievance and perceived injustice are validated within the group; at the second, these emotions gradually evolve into frustration and anger toward a clearly defined “enemy”; and at the third, violence increasingly becomes normalised and framed as a legitimate or justified response. Acting as echo chambers, these servers accelerate this progression: the most radicalised members, those furthest along the staircase, assume the role of mentors for new recruits, perpetuating a cycle that intensifies shared emotional and ideological frames.

Figure 7: Screenshot of a conversation between several users where early radicalisation can be perceived.

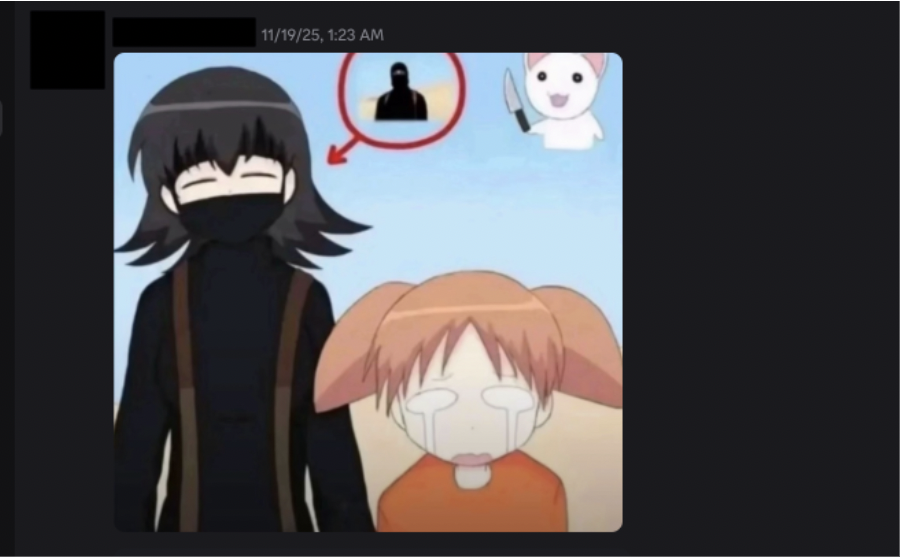

Within this environment, Discord also serves as a space for the circulation and normalisation of extremist propaganda. While official materials produced by jihadist organisations are present, a significant portion of the content is generated internally by members who assume the role of editors and creators. Through memes, graphic edits, and visual montages, users actively participate in producing and sharing material that reinforces dominant narratives. The exchange is reminiscent of a school playground, where trading cards are shown, swapped, and valued among peers: it reinforces community dynamics built on content created by and for digital natives, using visual codes, humour, and Gen-Z language.

Figure 8: Screenshot of a conversation in which a jihadist nasheed is distributed, and questions are asked about where to obtain content of this nature.

Figure 9: Screenshot of a conversation in which one user shows another where to obtain jihadist propaganda.

Figure 10: Screenshot of a jihadist meme shared on a Discord channel.

The convergence of these factors contributes to the consolidation of a highly homogeneous environment. Beyond amplifying early radicalisation, this dynamic lays the groundwork for strong social bonds and internal cohesion, transforming the echo chamber into a space of mutual recognition and a sense of belonging.

Collective Identity Formation

Digital communication environments linked to gaming have become central spaces for contemporary socialisation and identity formation. In particular, Discord has emerged as a key hub where leisure, everyday interaction, and at times, as seen in our analysis, extremist narratives intersect. Discord’s technical features allow these communities to organise themselves around assignable roles that generate visible hierarchies and sustain recurring weekly events. Active participation, compliance with internal rules, and performance during group activities are rewarded through symbolic recognition from higher-ranking members within the community hierarchy. Over time, this system encourages the internalisation of norms and reinforces group discipline, often making social belonging more vital than explicit ideological commitment.

Communication within these servers is typically informal and highly interactive, reinforced by frequent use of voice chat. Within this environment, jihadist terminology such as kuffar or jihad may appear alongside non-religious content, integrated into everyday language, jokes, or in-game narratives. When detached from their original context, these terms become normalised within the group’s shared vocabulary, lowering moral and cognitive barriers to extremist references.

Team-based gameplay further strengthens cohesion. Many activities require high levels of coordination, and the absence or poor performance of one member can negatively affect the group, increasing the pressure to remain active and compliant. The resulting sense of collective belonging, within overwhelmingly male-dominated communities, acts as a powerful driver of engagement. Extremist narratives are often perceived as aesthetic or secondary to the social experience. Under these conditions, radicalisation unfolds gradually, masked by familiarity, humour, and group loyalty. Leaving such communities therefore entails not only ideological disengagement but also the loss of social ties and recognition, reinforcing the resilience and cohesion of these digital environments.

Beyond individual engagement, these dynamics contribute to the long-term resilience of the communities themselves. When servers are removed or voluntarily deleted, social ties and shared practices are rarely disrupted. Instead, they are rapidly reconstituted through new servers, alternative accounts, or platform migration. This continuity is sustained by pre-existing networks, shared symbols, and a collective identity, allowing communities to persist despite episodic moderation. As a result, radicalisation is not confined to a single digital location but unfolds across a fluid ecosystem in which social belonging, rather than platform permanence, becomes the primary stabilising factor.

Conclusions and Recommendations

This Insight highlights the significance of proactive moderation strategies in fast-evolving, youth-oriented digital environments. While Discord demonstrates a clear institutional commitment to countering violent extremist content on its platform – as reflected in its comprehensive policies, transparency reports, and dedicated safety systems – empirical observation reveals that moderation systems must continuously evolve to anticipate and mitigate emerging risks. In this regard, Discord’s existing governance framework provides a strong foundation for disrupting harmful behaviours and presents important opportunities to further strengthen prevention efforts.

The findings also underscore the importance of intervening before radicalisation advances beyond its initial stages. The observed communities largely remain within the lower levels of radicalisation, where extremist narratives are normalised through aesthetics, humour, and everyday interaction rather than explicit operational intent. Robust early-detection mechanisms, including automated tools capable of identifying evolving keywords, coded language, and visual cues that reflect the ever-changing nature of Internet subcultures, may help prevent progression up the staircase. These tools nevertheless have limitations that, at present, only human intervention can overcome. It is therefore worth continuing to strengthen human moderation that takes into account the context and culture in which these communities are sustained. Discord’s TCO Report suggests that such measures are already underway.

Moreover, the presence of minors represents a particularly critical area requiring sustained proactive safeguards. Discord’s new teen-by-default settings represent a significant advancement in embedding age-appropriate protections directly into the platform’s architecture, as they provide a strong foundation for further strengthening early-stage prevention and reducing the risk of sustained exposure to extremist content among younger users. At the same time, the cyclical movement of users across platforms, especially between TikTok and Discord, highlights the broader ecosystem-level challenge of ensuring consistent protection across digital environments.

Taken together, these findings suggest that countering online radicalisation in semi-private, youth-populated spaces requires more than policy enforcement alone. It demands adaptive, coordinated, and preventive approaches that address not only content but also the social dynamics and identity-building processes, such as those found in internet subcultures, through which extremist narratives are normalised and sustained.

–

Miguel Gómez holds a Master’s degree in Geopolitics and Strategic Studies from Universidad Carlos III de Madrid and is an early-career researcher. He has previously worked as an intelligence analyst and holds a double Bachelor’s degree in International Relations and Law. His work focuses on geopolitics, terrorism, and hybrid threats, and includes intelligence and geopolitical analysis of international conflicts, terrorist dynamics, and extremist networks across multiple regions.

María Remiro holds a Master’s degree in Geopolitics and Strategic Studies from Universidad Carlos III de Madrid and is an early-career researcher. She has previously worked as an intelligence analyst and holds a Bachelor’s degree in International Relations. Her work covers extremist narratives, online propaganda, and the impact of geopolitical dynamics on the evolution of violent extremist movements, with a particular focus on the Sahel region.
