New online platforms appear constantly, and many are ripe for extremist exploitation even where moderation policies are in place. One such platform, Viggle AI, launched in March 2024 and already boasts over 40 million users, with rapid uptake among both legitimate and malicious users. While monitoring three online extremist ecosystems, namely the extreme right, the True Crime Community (TCC), and the Salafi-jihadi ecosystem, the authors observed recurrent use of Viggle, a generative AI platform, to spread propaganda. Across ecosystems, Viggle’s features have been exploited to produce videos of famous attackers dancing, with the number of victims killed in their attacks highlighted on screen. Although the three ecosystems differ in ideology, they use Viggle AI for the same purpose: creating appealing, catchy content that glorifies violence and death. This content has been disseminated both through the Viggle AI app and on social media platforms, particularly those popular with young users, such as TikTok.

This Insight therefore examines how generative AI tools such as Viggle AI can be used to create extremist propaganda that appeals to youth and glorifies violence across the three ecosystems. In particular, it analyses Viggle AI-related audiovisual content depicting popular attackers and figures associated with the extreme right, the True Crime Community (TCC), and the Salafi-jihadi ecosystem.

What is Viggle AI?

Viggle AI is a free image-to-video AI generator that allows users to turn any image into a video. It can be used with motion templates or with original videos that serve as motion references. The tool offers several options for creating videos. Among them is an AI Dance Generator, which animates an image that the user uploads to the app, using either an available dance template or a new reference clip supplied by the user.

The Viggle AI app has a feed that is divided into three sections: “Trending”, “Explore” and “Followed”, which, alongside a search bar, allows the user to freely explore all the content created through the AI. Through the app, users can also consult other users’ profiles and view statistics such as “Mix”, “Likes”, “Followers”, and “Following”.

Content produced in the app retains the Viggle AI watermark when the free version is used and can then be downloaded, updated, or shared directly on other platforms, such as Instagram or TikTok. Both platforms are mentioned in a blog post on the Viggle AI website, which highlights how “Viggle AI has become a significant driver of trends on TikTok” due to its features.

Viggle AI and the Extreme Right Online Ecosystem

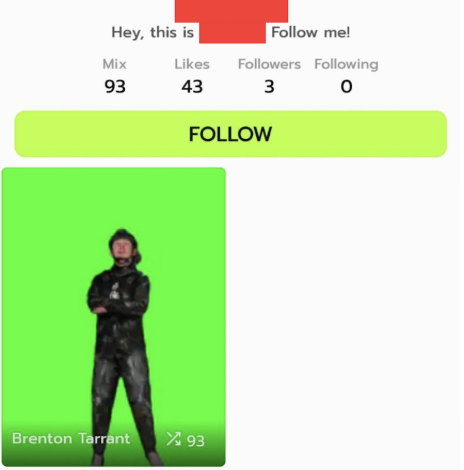

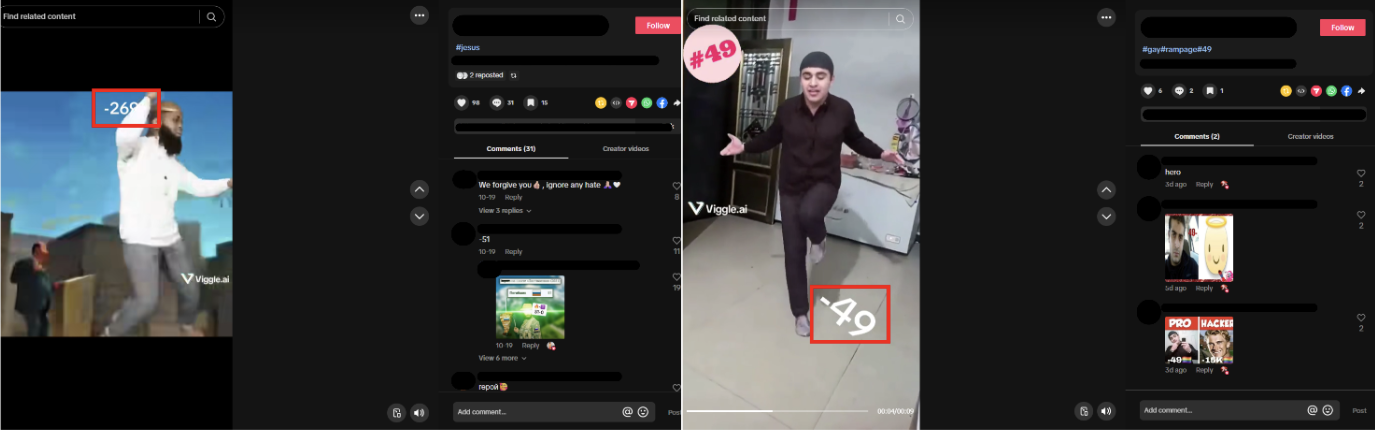

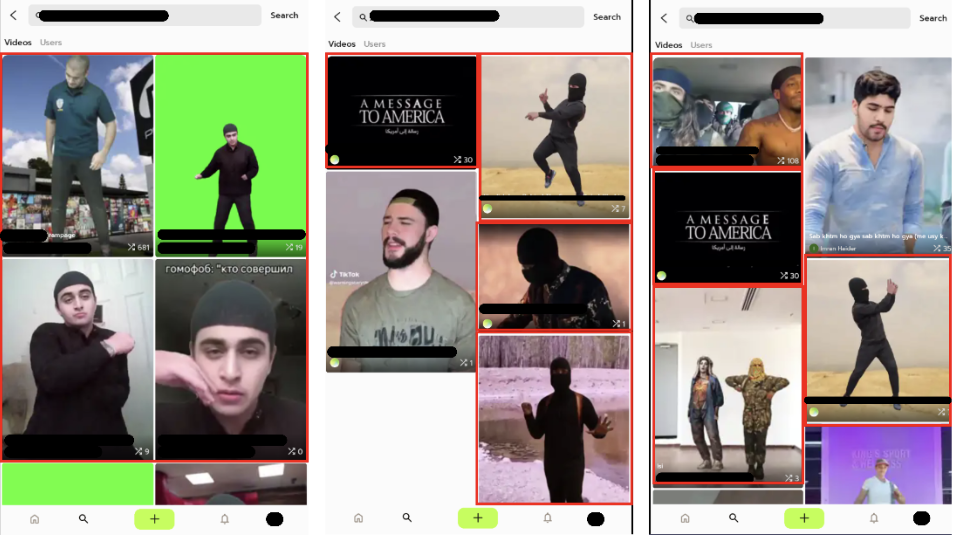

In the Viggle AI app, content related to famous extreme-right shooters such as Brenton Tarrant, Anders Breivik and Payton Gendron was readily identified. Through the search bar, it was possible to find both videos and users that included or referred to these figures (Figure 1). Most of the videos found depicted the shooters dancing and were used on TikTok as part of the ‘Rampage dance’ trend.

The vast majority of the content found was dedicated to Tarrant, who remains highly relevant in far-right extremist online ecosystems because he livestreamed his attack, which later inspired other attackers.

Typically, the shooters are depicted dancing in locations readily associated with their attacks: Tarrant is frequently shown near the Al Noor Mosque, while Gendron is usually shown near the Tops Friendly Market. Another common element is a reference to their victims, usually the number of people killed alongside an emoji indicating their religious affiliation. In Tarrant’s case, the text “-51” followed by the emoji “☪️” usually appears on screen, indicating both the number of victims of his attack and their religion in an Islamophobic glorification (Figure 2).

Figure 1: An example of a profile sharing a Brenton Tarrant video edit on the Viggle AI app.

Figure 2: A video of Brenton Tarrant dancing in front of the Al Noor Mosque. Next to him appears the number of his victims alongside the star-and-crescent symbol, which refers to Islam.

These videos explicitly glorify the violence of the attacks while omitting their ideological context, with comment sections subsequently filling the gap by directing viewers to information about the attackers.

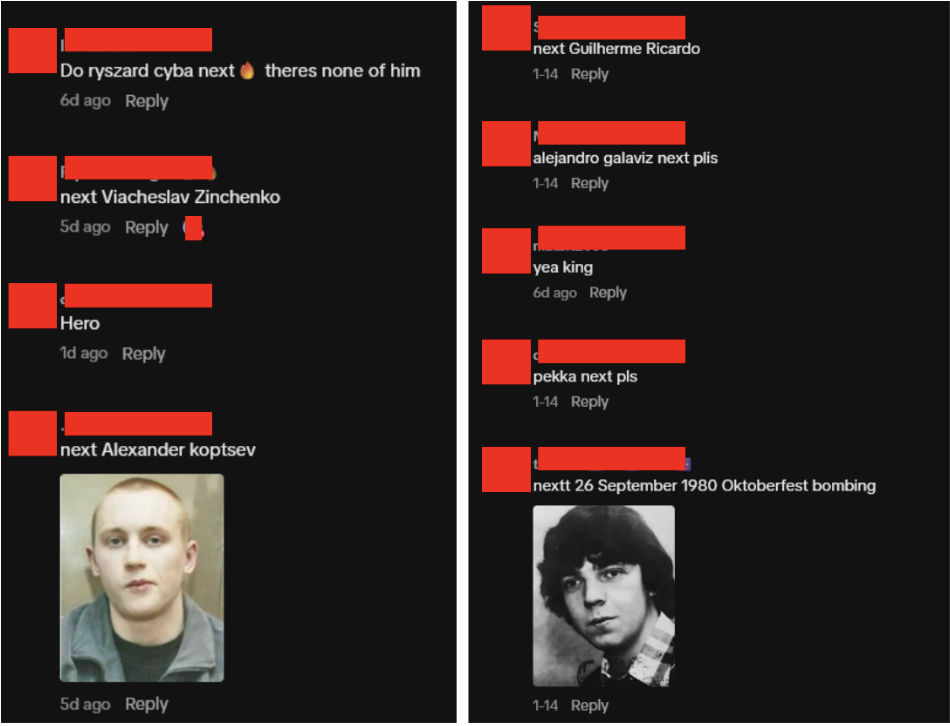

Figure 3: Example of users on TikTok requesting more information regarding an attack through the comments of a video created with Viggle AI.

Viggle AI and the True Crime Community (TCC) Online Ecosystem

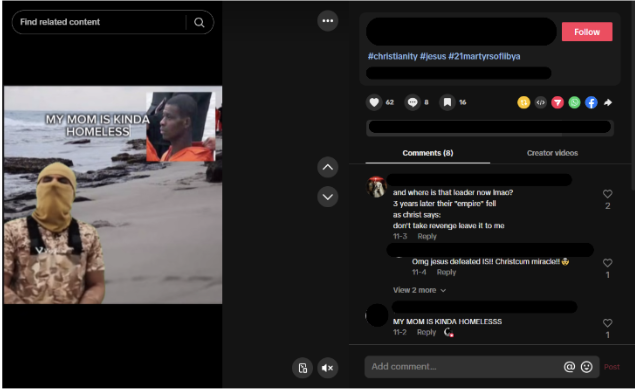

The authors have also identified widespread use of Viggle AI by the True Crime Community (TCC). Within this ecosystem, some people develop a strong fascination and obsession with killers, their lives and their crimes, and create materials to celebrate murderers. While there is often no shared ideology, violence is glorified and promoted, a process that AI tools can facilitate and accelerate. Specifically, some members of the TCC online ecosystem are using Viggle AI to create videos featuring serial killers and school shooters dancing in front of the locations of their attacks. These videos also display the number of victims associated with each attack. Within this community, content related to violence is drained of its negative meaning and depicted as joyful and memorable. Moreover, the comment sections on the social media platforms mentioned above indicate that some videos are even produced on request, as shown in Figure 4.

Figure 4: An example of a request made by users to create a video with a specific murderer.

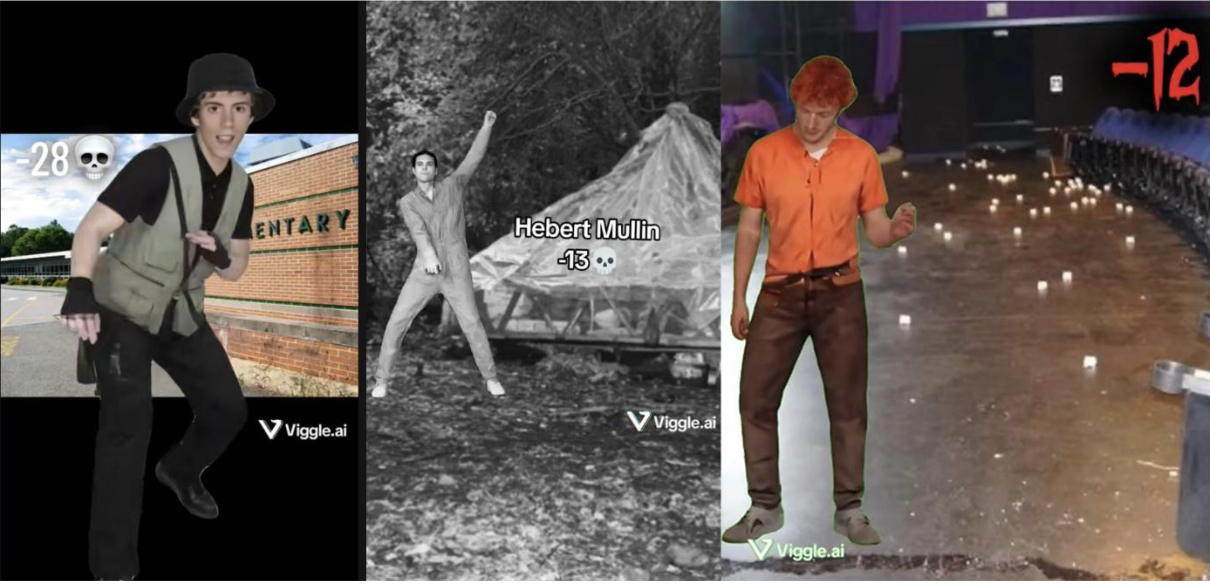

The most commonly displayed subjects are school shooters, likely due to the ease with which young users can identify them, followed by other forms of multiple-homicide offenders. Content related to the perpetrators of the Columbine High School attack, the Sandy Hook Elementary School attack, and the Abundant Life Christian School attack was accessible online, in addition to that of several American serial killers (Figure 5).

Figure 5: Example of videos shared, generated with Viggle AI, where school shooters and killers are dancing.

These videos do not explicitly incite murder; rather, they contribute to the normalisation of violence. As noted by Ondrak and Vitelli, this kind of content creation may lead to “the valorisation of the act of these attacks, reducing violence to aesthetic action”, celebrating the murderers. Sharing such content therefore promotes not a specific ideology but violence as entertainment.

Viggle AI and the Salafi-Jihadi Online Ecosystem

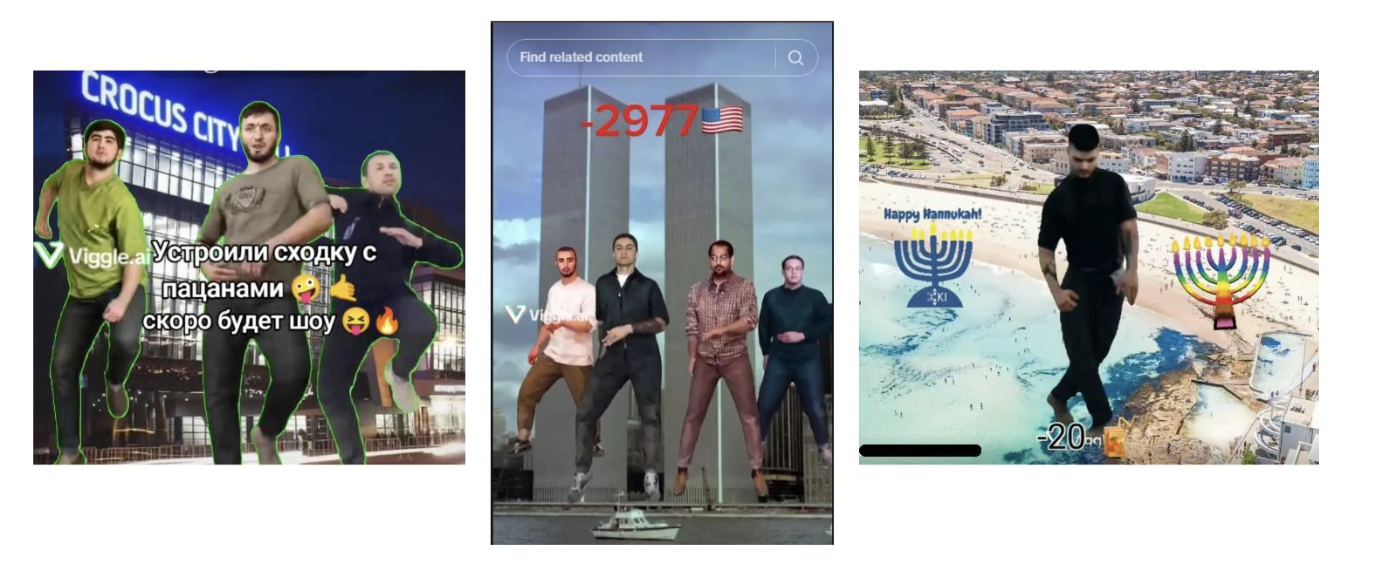

Viggle AI-generated videos are widespread in the pro-Salafi-jihadi online ecosystem as well. Like the others described, these videos primarily glorify famous terrorist attacks. Typically, their main characters are media-famous mujahedin, such as Jihadi John and/or the so-called ‘French Barber’, and other notorious terrorist attackers. These figures are usually portrayed adopting violent attitudes, often while performing the viral dance trend known as ‘rampage’, a short-form street-dance combo that blends robotic animation with illusion-based gliding. The authors observed the recurrent use of individuals linked to well-known terrorist attacks, including 9/11, the Crocus City Hall attack, and most recently, the Bondi Beach attack (Figure 6).

Figure 6: Examples of Viggle AI videos related to the Crocus City Hall, 9/11, and Bondi Beach terrorist attacks.

These videos are consistently characterised by visual indicators that display the number of victims associated with each attack (Figure 7).

Figure 7: Examples of AI-generated content highlighting the number of victims killed during the attacks perpetrated by the two individuals.

For instance, Figure 7 shows two videos portraying Zahran Hashim (left) and Omar Mateen (right) performing the ‘rampage’ dance, with numbers indicating the victims killed in their respective attacks. Viggle AI was also used to produce videos featuring well-known jihadist and mujahedin figures, designed to appeal particularly to younger audiences on platforms such as TikTok (Figure 8).

Figure 8: Examples of memes created using Viggle AI and the figures of media-famous mujahedin.

Although Viggle AI appears to be the ‘creative factory’ where videos are produced, audiovisual material is also disseminated directly on the platform itself. By using keywords related to the Islamic State (IS) and/or individuals associated with the Salafi-jihadi ecosystem, it was possible to detect extremist content on the platform (Figure 9).

Figure 9: Search results obtained using Salafi-jihadi-related keywords.

Specifically, there are videos showing terrorists performing the ‘rampage dance’ against backgrounds tied to attack sites, or against green-screens, enabling users to easily generate new content (Figure 9).

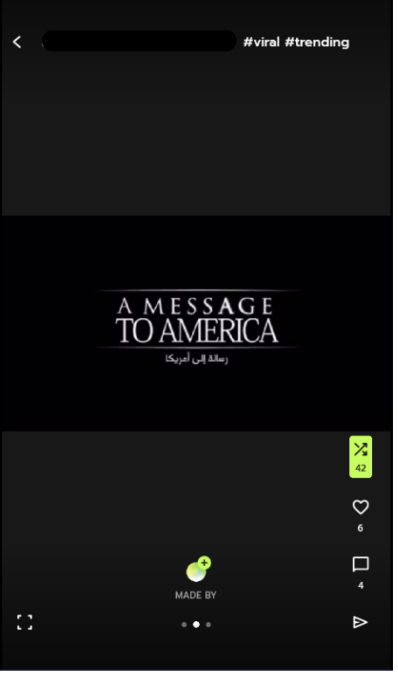

In particular, the authors identified a large volume of images and/or videos featuring attackers such as Omar Mateen, Zahran Hashim, and Muhammadsobir Fayzov, linked respectively to the 2016 Orlando, 2019 Sri Lanka church and 2024 Crocus City Hall terrorist attacks. Most often, such audiovisual content is combined with explicit hashtags referring to the Salafi-jihadi environment and to the number of victims killed, without any form of censorship. Furthermore, the authors observed videos containing pro-IS institutional propaganda. For instance, a video related to the pro-IS institutional media outlet al-Furqan was detected (Figure 10).

Figure 10: A Viggle AI video created by combining a famous IS institutional propaganda video with the figure of Jihadi John.

In particular, the notorious “A message to America” video produced by the aforementioned official al-Furqan media house was used to create memes by superimposing the figure of the well-known ‘Jihadi John’, while leaving the IS media outlet logo visible.

Conclusion

It is clear that Viggle AI, like other similar generative AI tools, represents a new frontier in the creation of spontaneous extremist propaganda. The platform plays a dual role within the online environment. On the one hand, Viggle AI functions as a ‘creative tool’ for the development of alternative audiovisual material across the three extremist ecosystems. By using this generative AI platform, supporters of the three ecosystems can create content that appeals to younger audiences, who are particularly active on social media platforms. On the other hand, owing to the ‘social features’ provided by the platform itself, users have been seen to share the final AI-generated products directly on Viggle AI. The platform therefore also functions as a repository for this type of content, so users do not necessarily need to seek it out on other platforms, such as TikTok.

Moreover, Viggle AI serves as a common denominator across the extremist ecosystems under consideration. The audiovisual content produced by supporters of the three ecosystems reveals a common willingness to idolise and glorify violence. By using well-known, popular terror-related figures and stressing the violent aspect, supporters can generate a modern ‘ode to pure violence’ that appeals to young people, without requiring any particular affiliation to the extremist causes represented by the three ecosystems.

It should be noted that TikTok is focused on the dismantling of terrorist-related material on the platform through a “combination of technology, safety experts, and security professionals, alongside threat-detection partners.” Similarly, Viggle AI is designed to provide a safe space within the platform by assessing problematic and violent content.

Notwithstanding this, the type of content detected and analysed by the authors is, to some extent, harder to detect because it is not as explicitly violent as other types of extremist content present on social media. Platforms should therefore begin monitoring other relevant elements that can be used to identify this specific type of video: keywords, numbers, or places that reference an attack, as well as modified names of extremists, were frequently mentioned in the videos’ descriptions. In this respect, these platforms could broaden their focus to identifying images of attackers, often combined with other elements, that are instrumental in the creation of this particular and subtle form of online propaganda. Finally, a variety of stakeholders beyond the technology companies themselves should place much more focus on controlling how generative AI is exploited and on regulating the content it generates.

–

Silvano Rizieri Lucini is a Senior Researcher at the Italian Team for Security, Terroristic Issues and Managing Emergencies – ITSTIME. He specialised in Digital HUMINT and OSINT/SOCMINT, oriented in particular on Islamic terrorism and right-wing extremism. He focused on monitoring terrorist networks (from clear to dark and deep web), with particular attention to new communication strategies implemented by terrorist organisations.

Alessandra Pugnana is a Research Analyst at the Italian Team for Security, Terroristic Issues & Managing Emergencies – ITSTIME. She specialised in OSINT, SOCMINT, and Digital HUMINT. Her research activities are focused on monitoring terroristic networks, with particular attention to nihilistic violence, right-wing extremism and its new communication strategy.

Grazia Ludovica Giardini is a Junior Researcher at the Italian Team for Security, Terroristic Issues, and Managing Emergencies – ITSTIME. She specialises in the application of Open Source Intelligence (OSINT), Social Media Intelligence (SOCMINT) techniques, and Digital Human Intelligence (Digital HUMINT). Her research activities are focused on monitoring Salafi-jihadi groups’ communication strategies and propaganda through various online platforms.
