Introduction

On June 29, 2020, YouTube removed 25,000 channels, including several prominent white supremacist figures such as David Duke, Richard Spencer, and Stefan Molyneux. While Duke and Spencer were generally well-known white supremacist figures, Molyneux specifically built his reputation on YouTube. The self-described philosopher has been labelled a cult leader spreading white supremacist ideas to millions of YouTube users, radicalising impressionable young men. The New Zealand Royal Commission also concluded that the Christchurch shooter watched and donated to Molyneux’s channel. Although watchdog organisations like the Southern Poverty Law Center extensively warned of Molyneux’s extremism, popular narratives about YouTube radicalisation still often focus on passive algorithmic pipelines and extreme cases of political violence. However, there is scarce empirical evidence on how audiences engage with problematic YouTube channels. YouTube’s current deplatforming efforts, by simply removing all data from public view, limit further critical analysis and understanding.

In this Insight, we present the key takeaways from an exploratory audience engagement study using an archival dataset of approximately two million YouTube comments from a ten-year period (2008 – 2018) on Molyneux’s YouTube channel. This rare dataset offers unique insight into an important moment in the growth of online far-right radicalisation, one that is currently invisible through the platform’s application programming interface (API). This piece aims to show the ‘everyday’ aspects of audience engagement with extremism on YouTube. To make our case, we first argue for a more participatory cultural understanding of YouTube radicalisation, highlighting active audience participation. We then present our methods and findings, revealing key participatory and discursive elements in Molyneux’s community. While Molyneux’s audiences express a wide range of opinions using various engagement tactics, we show how core audience members engage with extremist discourse out of a perceived pursuit of ‘Truth.’

YouTube Radicalisation

Over the past decade, YouTube has become notorious for its ‘radicalising power.’ Commentators such as the sociologist Zeynep Tufekci have claimed that YouTube ‘may be one of the most powerful radicalising instruments of the 21st century.’ Although reporting is shifting, YouTube radicalisation is mostly attributed to sophisticated algorithms and how they expedite far-right extremism. However, recent research argues that such techno-centric analyses fail to account for audience demand: extreme political communities have incredibly high participation rates. Indeed, as audience studies have consistently demonstrated, viewers, readers, and listeners actively create meaning from and with the media they consume. As we have argued elsewhere, audiences in these communities “perceive themselves less as observers and more as participants in a conversation in which their voices matter”. It is thus worth asking how and why audiences engage with such extreme political narratives.

It might seem perplexing to those outside these YouTube communities that ideas about ‘scientific racism,’ eugenics, and white supremacism would engage vast audiences. However, on YouTube, such ideas are often embedded within a specific vernacular culture, appealing to existing sensibilities by translating extreme political ideas to the platform logic to which users are already predisposed. For example, Becca Lewis pointed out that extreme political figures on YouTube use the same tactics as other popular influencers, engaging in interviews, guest appearances, debates, vlogging, and reaction videos.

Media studies scholarship has thoroughly documented how such practices help build and maintain audiences and establish high levels of intimacy and parasocial relationships. YouTube increases this parasocial potential by affording audience engagement through features such as ‘likes’ and ‘comments’. In fact, YouTube’s technological facilitation of reciprocity is often seen as one of the platform’s core strengths. Extreme political figures on YouTube do not merely broadcast their ideas to a passive and receptive audience but develop them in dialogue with their audience, who present their own ideas, sources, and arguments.

Methodology

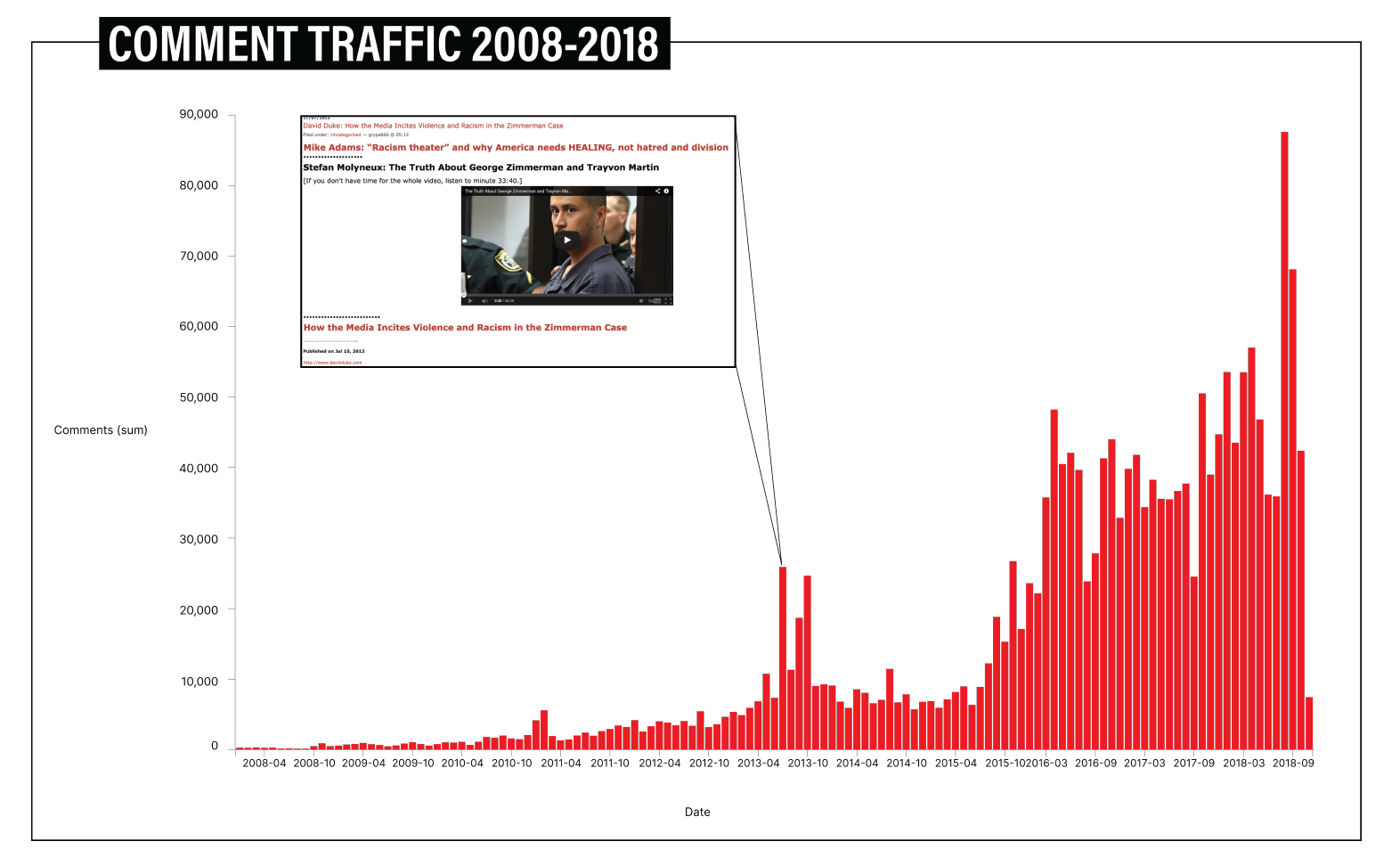

We used an archival dataset, initiated by Dutch journalists at De Volkskrant and De Correspondent, containing approximately two million YouTube comments from a ten-year period (2008 – 2018) on Molyneux’s YouTube channel. With it, we could (1) analyse when Molyneux attracted active audience engagement and (2) follow how audiences engaged over time. We mapped engagement by plotting the number of comments per month and contextualising the emerging peaks. We used computer-assisted content analysis with 4CAT to detect important discussion points. On a micro-level, we specifically analysed the comments of six (anonymised) highly engaged audience members over time.
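The engagement mapping described above can be illustrated with a minimal Python sketch. It is not the code used in the study: the timestamp format, the function names `monthly_counts` and `find_peaks`, and the rule of flagging months above twice the median volume are all assumptions made for illustration, and the sample data are invented.

```python
from collections import Counter
from datetime import datetime
from statistics import median


def monthly_counts(timestamps):
    """Count comments per calendar month from ISO 8601 timestamp strings."""
    counts = Counter()
    for ts in timestamps:
        posted = datetime.fromisoformat(ts)
        counts[posted.strftime("%Y-%m")] += 1
    # "YYYY-MM" keys sort chronologically when sorted as strings.
    return dict(sorted(counts.items()))


def find_peaks(counts, factor=2.0):
    """Return months whose volume exceeds `factor` times the median month.

    The threshold is an illustrative assumption, not the study's criterion.
    """
    baseline = median(counts.values())
    return [month for month, n in counts.items() if n > factor * baseline]


# Invented timestamps standing in for the archived comments.
sample = [
    "2013-05-02T10:00:00",
    "2013-06-11T09:30:00",
    "2013-07-14T18:05:00",
    "2013-07-15T08:12:00",
    "2013-07-15T21:40:00",
    "2013-07-20T13:00:00",
    "2013-08-01T07:45:00",
]

counts = monthly_counts(sample)
peaks = find_peaks(counts)
print(counts)
print(peaks)
```

A simple threshold like this only surfaces candidate months. Contextualising a peak, that is, linking it to a specific video or event, remains an interpretive step.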

Operationalising the concept of ‘radicalisation’ is tricky. This is partly due to the divergent and sometimes vague interpretations of the concept from a diverse set of fields, including criminology, anthropology, social movement studies, and media and communications studies. When we talk about radicalisation in the context of this Insight and our study of ‘everyday’ extremism on YouTube more broadly, we follow the definition of radicalisation as “the process of developing extremist ideologies and beliefs”. As Peter Neumann points out, extremism does not necessarily imply violent acts or terrorism but may refer more broadly to adopting “political ideologies that oppose a society’s core values and principles”.

The Zimmerman Trial

Our first analysis aims to uncover important moments in the development of Molyneux’s participatory community through his YouTube comment section (Fig. 1).

Figure 1 shows that Molyneux progressively attracted more participatory audiences, with a significant first peak of engagement occurring in 2013. This peak corresponds with Molyneux’s video analysis of the highly publicised criminal case and trial of George Zimmerman. Zimmerman, a neighbourhood watch volunteer, was charged with the second-degree murder of the 17-year-old African American teenager Trayvon Martin. In their book Meme Wars, Joan Donovan, Emily Dreyfuss, and Brian Friedberg argued that the Zimmerman trial was a key moment in developing white supremacist internet culture, a hypothesis that our findings support. But while on 4chan the Zimmerman trial became part of reactionary meme culture, Molyneux’s video titled ‘The Truth About George Zimmerman and Trayvon Martin’ took a serious approach, claiming that mainstream media were not telling the truth and were misreporting the facts (the video can still be watched on YouTube).

The Zimmerman trial seems to have been a turning point in Molyneux’s discourse and audiences; before it, his community was more concerned with libertarian ideas. The video was shared by David Duke and circulated among white supremacist audiences, which explains some of the overt racism in the comments. This point was also noted by an audience member who had been commenting on his channel since 2011:

This video about Zimmerman has drawn out some very savage comments from those who don’t seem remotely interested in the truth (really makes me appreciate the normal comments). I wonder if this video is being exposed via google recommendations to a wider mainstream audience.

The Zimmerman trial was one of the first moments at which Molyneux’s community grew significantly beyond its libertarian origins, with various audiences joining the online culture wars. As Stuart Hall theorised, audiences performed preferred, negotiated, and oppositional readings of Molyneux’s takes, and it is important to note that the audience is not monolithic but an aggregation. Audiences have different uses and gratifications for watching Molyneux, such as expressing anger, trolling, hate-watching, and mocking, making it difficult to assess any direct effect of the video on anyone’s political beliefs. The current conception within political persuasion theory is that people have “stable, cognitively efficient predispositions that are commonly activated and reinforced – but rarely changed – by information disseminated by persuasion campaigns and within social media”.

That being said, the Zimmerman trial does reveal a key new discursive element that cannot be found in any comment before the trial: race-baiting. While traditionally used to identify a racist act, the term was used by reactionaries around the Zimmerman trial to claim that mainstream media were clouding “logic and facts by appealing to emotion through false accusations of racial discrimination”. As we have argued elsewhere, Molyneux increasingly evoked this framing of a highly emotive media vis-à-vis himself as an objective and rational “philosopher”, mediating between the “ugly truth” of objectivism and the wishful thinking of social constructivists. This discourse is highly important in his later discussions on scientific racism. As Molyneux stated in an interview with Dave Rubin, “there is almost nothing harder to absorb than this question of differences in IQ between groups”, juxtaposing his personal discomfort with the ‘facts’ about the hierarchy of different eugenic ‘races’.

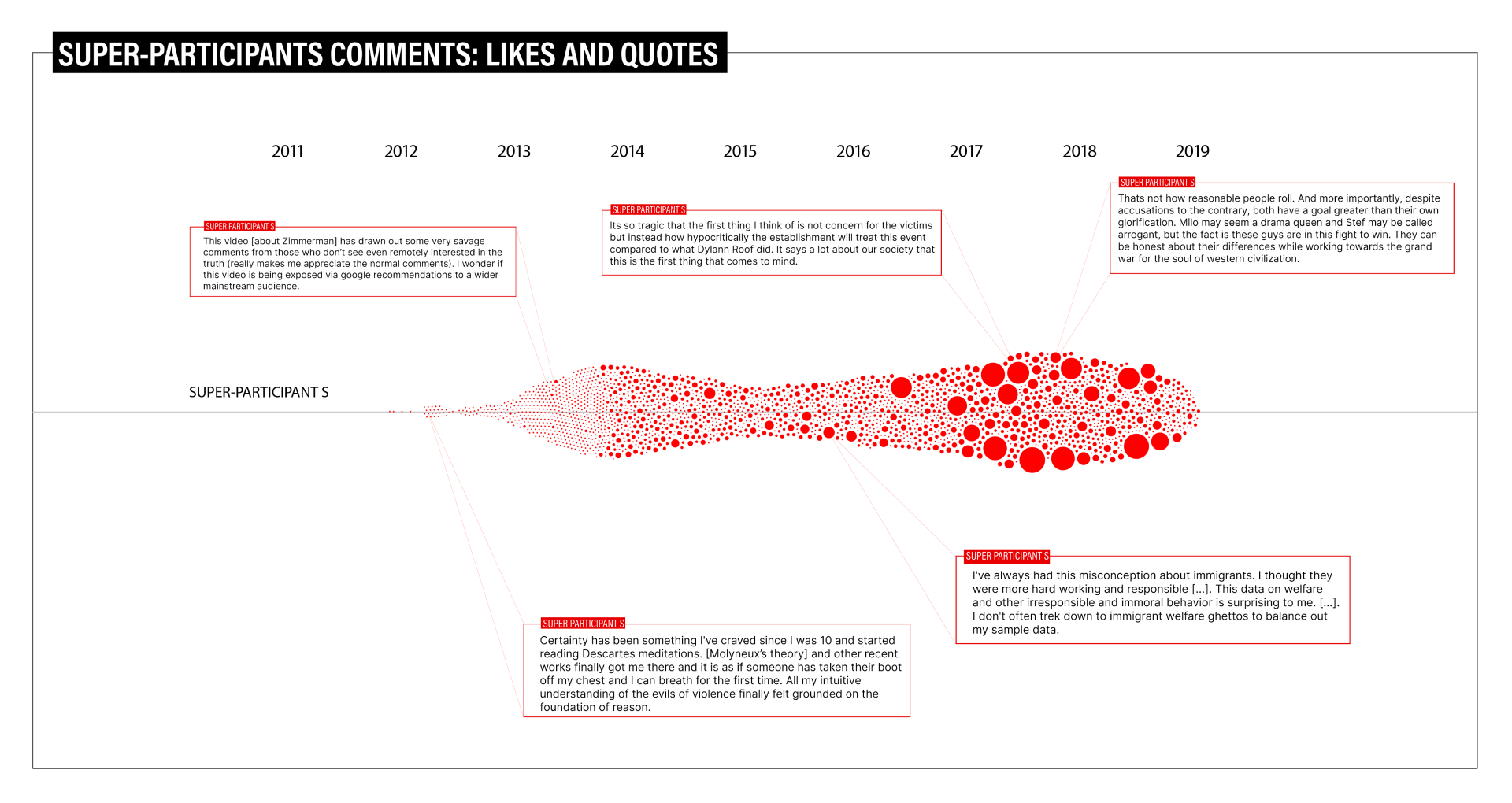

Audiences’ Pursuit of the Truth

The ‘logic and facts’ framework is useful for understanding certain radicalising dynamics in the commenting behaviour of long-time super-participants in Molyneux’s community. The key takeaway from a close reading of highly involved audience members’ comments is that they contain extensive reflections. Molyneux seems to offer his community members an ‘objective’ framework that allows them to make sense of the world and their place in it. Using super-participant S’s trajectory here as an illustration, we see how this ‘objectivity’ becomes more extreme (Fig. 2).

Having started commenting in 2011, super-participant S wrote in 2012:

Certainty has been something I’ve craved since I was 10 and started reading Descartes meditations. [Molyneux’s theory] and other recent works finally got me there and it is as if someone has taken their boot off my chest and I can breath for the first time. All my intuitive understanding of the evils of violence finally felt grounded on the foundation of reason.

Molyneux offers this feeling of liberation and freedom through ‘logic’, ‘facts’, ‘reason’, and ‘Truth’. This philosophical framework allows audiences to change their minds on politically charged topics like immigration. For instance, super-participant S captures a reframing of immigration discussions in 2015 following a dispassionate analysis of the data:

I’ve always had this misconception about immigrants. I thought they were more hard working and responsible […]. This data on welfare and other irresponsible and immoral behavior is surprising to me. […]. I don’t often trek down to immigrant welfare ghettos to balance out my sample data.

Building on the concepts of ‘logic’, ‘facts’, ‘reason’, and ‘Truth’, long-time members of Molyneux’s community underline their objectivist worldview by using deeply ethical frames in which terms such as ‘evil’ and ‘immoral’, previously used for libertarian discussions, are applied to more extreme discussions. This type of sensemaking occurs across various manifestations of ‘online othering’ in Molyneux’s comment sections, including extreme political polarisation, racism, and sexism, amongst other forms of discrimination. The trajectory of super-participant S in our dataset ends with full involvement in the “grand war for the soul of western civilisation”.

Conclusion

Our exploratory study of the comments on Stefan Molyneux’s YouTube channel sheds light on a historical moment of radicalisation on the platform. Using close and distant reading techniques of audience engagement, our analysis provides a unique perspective on the radicalisation process that broadens the focus beyond algorithmic recommendations. We find that Molyneux’s core audiences participate in a perceived ‘intellectual’ discourse that differentiates their community from the mainstream media, the emotive left, and overt racism. We discovered an almost obsessive relationship with logic, reasoning, and truth as core values among Molyneux’s most engaged audiences, who frame extreme ideas as the ‘sad truth.’

The culture wars in which reactionary actors leverage facts, logic, and reason are expected to remain part of the digital space for some time. We would therefore align ourselves with scholars who question the assumption that the issues discussed above can be reduced to problems of misinformation and fact-checking. Rather, we need to attune ourselves to how platforms enable participatory far-right communities to construct their knowledge and build their worldviews in order to counter their persuasive rhetoric.

We know that such radicalisation dynamics are challenging to track and scale; however, now that YouTube has taken on a more active moderating role, giving external parties access to removed data should be a start. As YouTube tends to downplay the scale of the issue, it remains unclear how it will address the persistence of similar far-right discourse on the platform. We hope our analysis of Molyneux’s community from a period when YouTube treated ‘the alt-right’ as a ‘content vertical’ can shed light on how to address these issues in the future.

Daniel Jurg is a PhD Candidate in Media and Communication Studies at the Vrije Universiteit Brussel. He is part of the research unit on News: Uses, Strategies & Engagements within the research group imec-SMIT at the Vrije Universiteit Brussel and the Open Intelligence Lab at the University of Amsterdam. Working on a scholarship from the Research Foundation Flanders (FWO), he studies the history of alternative influence on YouTube.

Maximilian Schlüter is a PhD Student of Information Science at the Graduate School of Arts at Aarhus University, Denmark. His research project revolves around studying and understanding disinformative memes in far-right online spaces using feminist critical theory.

Marc Tuters is an Assistant Professor in the University of Amsterdam’s Media Studies faculty where he is affiliated with the Digital Methods Initiative and the Open Intelligence Lab, studying political subcultures at the bottom of the Web.