In the wake of the racially motivated terrorist attack at a Tops supermarket in Buffalo, New York, in May 2022, it is crucial to assess the ways in which violent, white supremacist content presents on all social media platforms. The alleged shooter posted a 180-page manifesto detailing the racist and antisemitic beliefs he distilled from 4chan. Though platforms directly connected to the attacker are receiving increased scrutiny, the role of mainstream platforms like TikTok in promoting these violent ideologies is largely overlooked. The hateful ideologies which motivate attacks like the one in Buffalo are not unique to alternative platforms and message boards. As such, examining violent extremist trends and far-right discourse on TikTok is crucial to inform assessments of the current state of violent extremism online.

Successful moderation of hateful ideologies and violent extremist content online has long proved to be a deeply challenging problem for social media platforms. However, TikTok presents unique challenges given its explosive user growth in recent years as well as its particularly young user base. On TikTok, these ideologies can more easily permeate the mainstream, where they find new audiences susceptible to disinformation. TikTok videos have the potential to reach millions overnight, and thus white supremacist and militant accelerationist content can achieve unprecedented levels of exposure. TikTok’s recommendation algorithm is of particular concern because engagement with a single hateful narrative has been found to result in the further recommendation of other hateful narratives. While TikTok receives much attention for its dances and memes, the proliferation of extremist content is often overlooked despite the amplification it receives on the platform. This article examines how the white supremacist and militant beliefs which fueled the racist massacre in Buffalo manifest on TikTok.

Though TikTok is a mainstream video sharing platform with relatively strict community guidelines, gaps in enforcement have allowed ecosystems with violent and extremist content to thrive. While TikTok’s Community Guidelines, which ban content that “praises, promotes, glorifies, or supports violent acts or extremist organizations or individuals,” are fairly comprehensive and cover a wide range of hateful and violent content on the platform, the moderation frequently misses violations and fails to respond to ban evasion tactics. TikTok’s moderation gaps surrounding neo-Nazi Paul Miller, also known by his username ‘GypsyCrusader’, are a useful recent example of the inherent challenges facing the platform. Miller, currently serving a 41-month sentence for charges stemming from his illegal possession of a firearm, was featured in a video which garnered over 5 million views and 700,000 likes and remained on the platform for more than three months before being removed following reporting by Media Matters in July 2022. While TikTok had previously banned both his name and username, the video’s hashtags were intentionally misspelled as ‘Gypsy Crussader’ and ‘Paul Millier’ in an effort to avoid content moderation efforts. In response to public scrutiny, TikTok banned the two misspellings included in the aforementioned viral video, but the platform did not address any of the other alternative spellings.

A single TikTok is best understood as a three-dimensional meme composed of video, audio, and text. Two identical videos with different audios layered beneath them could have wholly contrasting meanings. Similarly, by changing the video or text, users create new memes with new messages. These memes then circulate on the platform largely via TikTok’s unique recommendation-based algorithm, which facilitates their spread and continued evolution. This design is what facilitates the dance trends and fun memes for which the platform is known. However, this unique platform design combined with inadequate moderation has also facilitated the shaping and sharing of far-right and white supremacist memes. The app seemingly serves more as an amplifier of hateful ideologies than as a source: extremist messaging and memes frequently migrate to TikTok from less regulated platforms like 4chan, Telegram, and Discord, with their violent aspects sanitised for the purpose of ban evasion and appealing to a more mainstream audience. Rather than originating such material, it contains a virtually endless supply of memes which echo the Buffalo shooter’s violent ethnonationalist, eco-fascist ideology.

TikTok isn’t usually a space where extremist narratives develop so much as one in which they are reiterated. As such, in the aftermath of the Buffalo attack, it was difficult to find significant amounts of TikTok content which explicitly endorsed the shooting itself (though some users did quickly turn to false flag narratives). Instead, TikTok users generally celebrate terrorists through engagement with larger, ongoing memes: entire accounts are dedicated to making memes and edits of terrorists such as the Christchurch shooter, the Las Vegas shooter, and the Columbine shooters. In the wake of each new incident like the racist massacre in Buffalo, as media coverage, political reactions, and legal prosecution proceed, monitoring TikTok-based celebration of the attacks will remain important. The glorification of perpetrators of violence is both a motivating factor for future perpetrators seeking celebrity and legacy and a component of the accelerationist model which aims to inspire similar acts of violence.

Figure 1: Content on TikTok which celebrates notorious mass shooters.

Anti-Black Rhetoric

TikTok’s algorithm serves videos which promote racist narratives linking Black people to violence and crime. Echoing the shooter’s fixation on crime rates by race, TikTok videos, such as the one below with over 765,000 views, attempt to correlate crime rates with the percentage of African Americans in the US.

Figure 2: A TikTok video with over 750,000 views promotes racist and misleading tropes about crime rates.

White Nationalist Narratives

Ethnonationalist and white supremacist narratives promoted in the shooter’s manifesto also circulate via TikTok’s meme format. For example, it is easy to find videos and usernames featuring the number 14 or the ‘14 words’, a reference to the popular white supremacist quote: “We must secure the existence of our people and a future for white children.” Though the platform displays a community guidelines message when users search for ‘14 words’, this attempt at moderation does not significantly hinder the spread of the narrative on the app. While it stops users from searching for posts with ‘14 words’ in the caption, it does not address how the dog whistle emerges organically on the platform’s For You Page (FYP), such as in embedded text or usernames.

Additionally, narratives about the degradation and collapse of modern society are common, and are often conveyed through ‘schizoposting’, an intentionally overwhelming and hypnotic editing style for short-form videos. The Buffalo shooter’s supposed belief that equality will never work, as well as his calls for the murder of local drug dealers, are narratives that have been consistently circulating in far-right TikTok ecosystems. Below are examples of each of these trends found during ongoing monitoring of TikTok.

Figure 3: White supremacist symbols, narratives, and dog whistles in TikTok videos. Two videos reference the “14 words,” one includes a sonnenrad, and another includes antisemitic and Islamophobic language.

Environmentalism and Eco-fascism

The shooter’s claims about the environmental harms of industrialised and ethnically integrated society echo what is perhaps one of the largest extremist currents on TikTok. Support for the ‘Unabomber’, Ted Kaczynski, is a popular sentiment on the platform and a consistent theme within neofascist accelerationism on other platforms. Though TikTok banned the hashtags ‘TedKaczynski’ and ‘TedPilled’, the hashtag ‘Industrialrevolutionanditsconsequences’ (a quote from Kaczynski’s manifesto) has 6.1 million views while ‘Industrialsocietyanditsconsequences’ has over 800,000 views and routinely features promotion of eco-fascist and anarcho-primitivist content. While the popular meme itself might not be considered inherently harmful or extremist, it gives insight into the popularity of Kaczynski on the social media platform. It seems that environmental concerns, particularly among young people who compose the majority of the TikTok user base, are blending with the ethnonationalist narrative of societal decay.

Figure 4: Content celebrating Ted Kaczynski.

Siphoning Users Off-Platform

It is also worth noting that TikTok’s algorithm serves to connect users to radicalising content, which extremists can then utilise to form communities in less moderated spaces. Once an account is established and has an audience, it can siphon users off into a “more private place to converse.” These are spaces where more hateful and violent content can be circulated without fear of moderation, and where the self-censorship required on TikTok falls away. These communities are often designed to facilitate radicalisation.

Figure 5: A TikTok account using a video to promote its Discord server as a “more private place to converse.”

Celebrations of Violence

Videos which either blatantly or ironically celebrate violence can also be found on TikTok. These videos often feature the relationship between violence and media attention, either by acting in ways which confirm the ‘extremism’ label or revelling in watching the media react to extremists’ actions.

Figure 6: Extremist content on TikTok which celebrates violence.

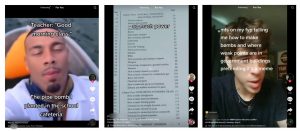

Homemade Weapons

The Buffalo shooter’s manifesto contained numerous pages on weaponry and tactical gear. It is worth highlighting that TikTok contains a concerning amount of content which serves similar instructional purposes. The platform contains videos which give explicit instructions for constructing homemade weapons as well as videos which comment on the popularity of these dangerous DIY TikToks. Accounts like the network recently reported on by Media Matters, which garnered millions of combined views, teach users how to make dangerous – and often illegal – weapons. While the Buffalo shooter may have been more concerned with guns, the parallel with TikTok users’ inclination towards sharing information about weapons is still worth noting.

Figure 7: TikTok videos either providing instructions on homemade weapons or referencing their popularity on the platform.

Conclusion

TikTok’s algorithm continues to recommend extremist content to its users – it’s even a meme – and bad faith actors continue to exploit this vulnerability. Users joke and exaggerate their experiences opening TikTok and immediately being bombarded with everything from ‘schizo’ edits, misogynistic and manipulative dating advice, and Patrick Bateman montages to blueprints of government buildings, advice for building weapons, Ted Kaczynski lectures, and various brands of ethnonationalism. Content that aligns with components of the Buffalo shooter’s manifesto is so pervasive on TikTok that the pervasiveness itself has become a meme.

Figure 8: Users discuss the presence of far-right content on their TikTok FYPs.

Text:

Image 1: “opening tiktok to see 4 patrick bateman edits, 13 schitzo post slideshows, 3 sigma male nightly routine, book recommendations on how to manipulate women, the blueprints of the supreme court, 7 edm nightcore gym motivation edits, 300 breaking bad and beserk memes, and instructions on how to build a nuclear bomb using fire alarms.”

Image 2: “tiktok after recommending me 3 sigma male night time routines, 4 nightcore gym edits, 25 right authoritarian eastern europeans promoting militarism propaganda, 9 patrick bateman guide to manipulate and murder women, a kosovo nationalist, a blueprint to a french nuclear bunker and 40 cryptobro mindset quotes”

Image 3: “opening tiktok to see 12 Patrick Bateman montages, 5 sigma lifter quote, a Serbian nationalist anthem, a dozen guys in crusader armor, a comprehensive guide to gaslighting women, the comprehensive lore behind Megamind getting zero bitches, 8.5 Better Call Saul memes, a schizosphrenia advocate, Beserk audio over a guy doing pullups, and 2 Ted Kaczynsci lectures”

It seems difficult to describe the far-right ecosystems on TikTok more succinctly than these users do in these memes. The app’s algorithm continuously pushes these narratives out to users in meme and video format. It is clear that TikTok’s design facilitates the dissemination and amplification of violent extremist ideologies. It is also clear that TikTok’s design shapes unique cultures on the platform. While the content of the messaging may not originate on TikTok, the delivery of the messages is unique. Extremist narratives echo those off-platform; however, the mechanisms of transmission are novel and poorly understood.

Given the novelty of the platform, research into the effects of the delivery of hateful ideologies via short-form video is lacking. In particular, research into the relationship between highly edited, fast-paced ‘schizo’ content and radicalisation is needed. Additionally, transparency from TikTok regarding its moderation efforts, as well as researcher access to platform data, is imperative to better understand and address this problem.

Abbie Richards is a TikToker and TikTok misinformation researcher. She specialises in understanding how misinformation, conspiracy theories, and extremism spread on TikTok and creates educational content which explains these complex issues to a wider audience. Abbie is a second-year master’s student studying the intersection of misinformation and climate change and is a research fellow at the Accelerationism Research Consortium.