Islamic State material, in any form, not least its raw and explicit form, has no place on the open web. It certainly has no role to play on Facebook, where accounts openly advertise their material and are easily identifiable by human researchers. The existence of such material points to possible shortcomings in the technical or human solutions currently in place to prevent Islamic State from (re)establishing its digital foothold.

The damage and harm done by Islamic State have earned it the status of being forever hunted, isolated, and removed from whatever host platform it is on. The cost of leaving it unchecked is too great and the risk too high. Time and again, we see the lethal results of individuals radicalised by the suave and sophisticated media campaign run by Islamic State.

There is good reason why the Caliphate invested significant logistical planning into ensuring that the digital battleground of social media was treated with the same intensity as its ground campaign across Iraq and Syria in 2014. The result was that social media, in 2014/15, was awash with its content, often urging (and resulting in) violence in different nations. Islamic State made the strategic judgement that social media offered an easy access point to the widest possible pool of potential recruits. Its leadership probably grasped this early and capitalised on it to lethal effect.

Islamic State attacks in Paris, Brussels, London, and Vienna all had an online component. That component transformed these acts of violence from isolated incidents of terrorism into a coordinated global campaign linking its entities across countries and time zones. It is for this reason, and many more, that the crackdown on all Islamic State media ‘wilayah’ was so urgent. It is why Facebook, Twitter and others began investing in more serious content moderation processes and why, justifiably, society demanded this of them.

Against this backdrop, the question must be asked: how does Islamic State content still exist on Facebook today? Accounts created explicitly to spread IS content are still being established and continue to share official IS releases. In time, these accounts are likely to be removed once identified, but this can take weeks or months. By that point, the material has often already been accessed and absorbed by the end-user. The strategy employed by these IS-supporting accounts shows an awareness of this vulnerability: they provide links to other accounts doing the same thing, and they share the raw material so that the end-user can copy it and keep it elsewhere. They are running an effective insurgency campaign aimed at supplying virtual arms to budding extremists globally.

This is also why a global moderation process, both technical and human, must apply a global standard to understanding the problem. Although tracing the origins of such accounts is always a challenge, it is worth noting that they are global in nature: some claim to be UK-based, while others give no indication of provenance. Moderator guidance anywhere in the world needs to reflect the principle that no illegal Islamic State content can have a home on the Facebook platform.

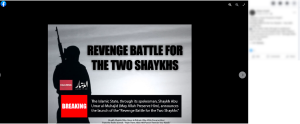

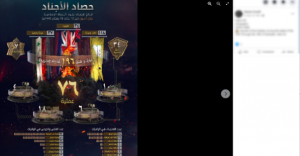

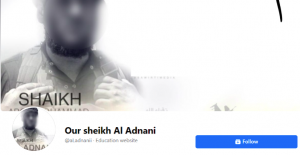

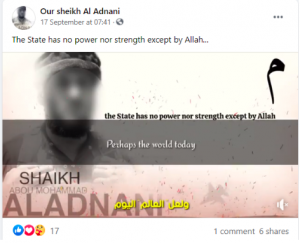

At the time of writing, dozens of Islamic State-supporting Facebook accounts are operating and linking to one another. The material on these accounts is openly branded as Islamic State, often with wording and images promoting IS material and figures. One Facebook account included an image of the recently released campaign titled ‘Revenge of the two Shaykhs’. Other accounts share images with the IS emblem and logo in their photos and profile pictures. The images below have now been removed by Facebook:

From an outsider’s view, it is difficult to understand how this material can remain on Facebook for any length of time. Some of the imagery appears unaltered and should therefore be caught by the existing technologies Facebook uses to detect and disrupt such content. Similarly, the symbols, wording and flags in this imagery are the same ones that have featured in Islamic State releases since 2014.

The problem also extends to high-profile Islamic State activists. One Facebook account, active in April 2022, had chosen an image of ‘Jihadi John’ as its profile picture. The photo was taken from an article about Jihadi John, and a reverse image search clearly identified who it was. Other accounts use images of individuals whom Islamic State has lauded and praised as suicide bombers or fighters.

If the existing algorithms and technology are so far struggling to pick this material up, it raises the question of how effective these automated tools are in the fight against terrorist content. We know that heavy emphasis is placed on users reporting content for review, but this must be matched by effective proactive measures that seek out and remove illegal content generated by terrorist organisations. If human researchers are able to identify these accounts, technical solutions should at the very least be able to do the same.

Nor is this a new phenomenon. In April 2021, a series of interlinked accounts were also generating and sharing Islamic State-branded material for themselves and their followers. The method and means of distribution appeared to match the current set-up: one account with thousands of friends served as the central node for sharing Islamic State-branded material. This account would often tag dozens of these ‘friends’ to alert them to the latest material it had obtained and encourage them to share it. This technique offered the quickest and easiest way to distribute the material. All screenshots below have since been removed by Facebook.

In April/May 2021, activists set up a page commemorating the former Islamic State spokesperson, Abu Mohammad al-Adnani. The page was titled “Our Sheikh Al Adnani”.

Failure in this arena comes at a real-life cost. Establishing effective programmes to counter this digital threat is therefore critical. We know that we are facing a dynamic and constantly evolving force, which actively and maliciously seeks to circumvent any legal or other barriers in its way. While positive steps have been taken in this field for several years, the measures and resources available to Facebook are far greater than those of Islamic State or any other terrorist group, and the company ought to be better prepared and better able to find, isolate and remove content that has no place in modern civil society.

In our current digital age, the importance of these measures cannot be overstated. Terrorism lives and breeds online, with real-world consequences. If we are serious about countering modern-day threats, the tools in our collective armoury need to be sharp and constantly updated. Society cannot readily accept a reality in which Facebook fails to find accounts promoting Islamic State on its platform.

CST has been in contact with Facebook’s Counterterrorism and Dangerous Organisations team, which has been on the front line of the defence against an Islamic State presence on Facebook. There can be no question that this team and its work deserve more funding and attention to ensure that Facebook is not seen as a safe space for terrorist content.

As well as more resources, a change in mindset is necessary. If Facebook, with its extensive resources, people, and technological expertise, is not finding this material quickly and removing it as soon as it is posted, this may be down to its leadership being unwilling to invest in the resources needed to do so.