In a recent interview with The New York Times, Substack CEO Chris Best described the email newsletter platform as an “alternate universe on the Internet.”

Indeed, the site has quickly become a haven for independent media and viewpoints diverging from mainstream thought. But as the platform has grown amid lax content moderation, it has also become a hotbed for misinformation and extremist discourse, especially as other platforms push away their own fringe actors.

Now, there is another label that better fits Substack’s position in the information ecosystem and its current trajectory: alt-tech.

This has already been acknowledged within fringe communities. As early as 30 July 2021, WikiSpooks (a wiki for conspiracy theorists) classified Substack as alt-tech, alongside Rumble, Telegram, Gettr, and others.

Social media intelligence agency Storyful analysed content mentioning Substack across alt-tech platforms, along with more than 9,000 Substack posts scraped from 100 unique accounts that spread conspiracy theories, false claims and/or highly extreme material (e.g., the QAnon movement). The analysis revealed a sequential process by which the platform has become an alt-tech hub.

As content moderation across social and digital media pushes extreme actors into a more decentralised framework, Substack is re-centralising fringe content on its platform in what one anti-vaxxer has termed “The Substack Era.” Furthermore, the site is actively promoting itself for this approach. Extremists and those driving misinformation have taken note, frequently citing the platform’s moderation policies as a primary reason for joining. Once established, these influencers promote not only their own content across social media but also Substack itself as an accommodating platform. Accordingly, a chain migration is occurring: like-minded fringe influencers see their counterparts’ success translate into significant influence and revenue, and follow suit.

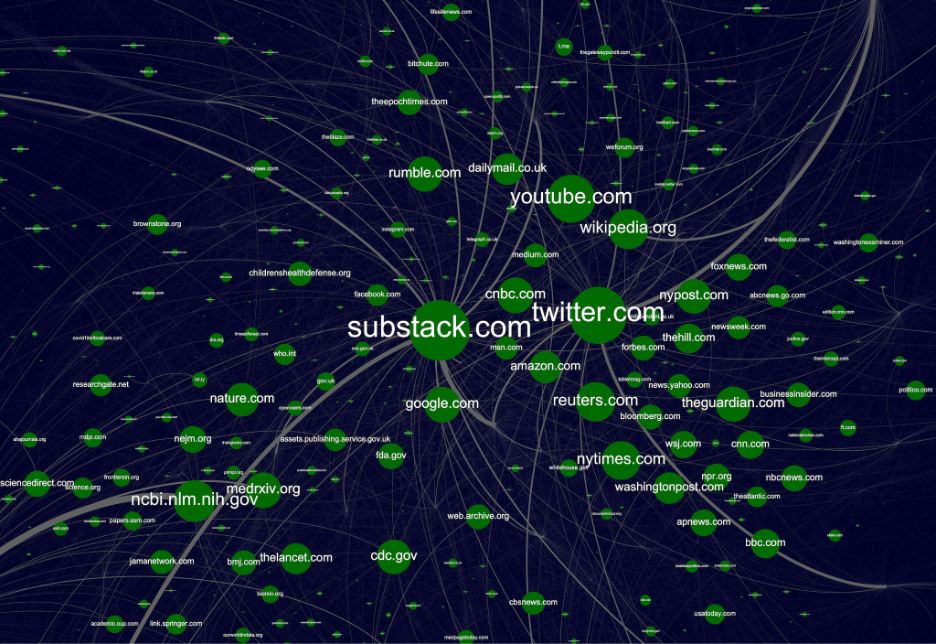

Above: Top domains shared by 100 Substack influencers show heavy reliance on platforms and legacy media, as well as how COVID-19 misinformation leans heavily into authoritative sourcing. A fuller, HD version of the above is available here.

Platform for the De-Platformed

Substack has long desired to establish itself as an unmoderated forum of independent thought. Its status as a platform for the de-platformed is baked into its identity and serves as a key means to attract highly influential writers.

Bari Weiss and Andrew Sullivan, for instance, view themselves as having been pushed out of a leftist-dominated intelligentsia, a view vaguely reminiscent of those de-platformed from sites like Twitter. Although such writers still dominate Substack, fringe actors also hold sizeable influence, which is being felt across broader social media. From 30 September 2021 to 31 March 2022, 17% of the 10,000 most engaged posts across mainstream social media (Reddit, Twitter, and Facebook) using the substack.com domain came from the 100 fringe influencers examined in this study, according to data from BuzzSumo.

It must be noted that this group of Substack accounts is not representative of all fringe elements on the platform, and only covers accounts which were found to spread multiple conspiracy theories, false claims, or highly extremist material within a cursory scan of their content. Yet these include four influencers in Substack’s own top 25 politics newsletters: COVID science skeptics Alex Berenson, Dr. Joseph Mercola, and Steve Kirsch, who have each spread a significant volume of COVID-19 misinformation, and Glenn Greenwald, who is promoting numerous false and unsubstantiated Ukraine biolabs conspiracy theories.

Substack in the Alt-Tech Ecosystem

Storyful’s analysis of 100 accounts found that many influencers de-platformed for driving COVID-19 misinformation have moved to Substack, where they operate freely and continue to spread false narratives around COVID. The platform also serves as a playground for some of the most influential extreme actors to spread conspiracy theories, particularly the high volume of misinformation around the 2020 US election, which is ongoing. White power narratives are less influential, yet present, with white supremacist group Europa Invicta free to promote its ideology while others push anti-semitism and conspiracies around white genocide and the Great Replacement theory.

Unchecked extremist actors on the platform are also growing their influence within fringe communities, effectively becoming thought leaders among like-minded actors. Substack has recently become rife with conspiracy theories about alleged Ukrainian weaponised biolabs. The impact of this is mainly felt in how other fringe influencers and sites, such as those on Rumble and sites like InfoWars, use content from Substack to drive misinformation. In other words, while one’s favourite fringe influencer may or may not be on Substack, they have likely been influenced by those on the platform, either directly or indirectly.

In fact, while the Ukrainian biolab conspiracy theory was started on Twitter by a now-suspended account, Clandestine has ported his Telegram influence (58k+ subscribers) to his new Substack page. As he wrote in his inaugural post on 2 March 2022, “Hopefully, with enough subscribers, I’ll be able to financially commit to providing content to you all full-time!” According to his Substack, the account already has ‘hundreds’ of subscribers, which would earn him anywhere from around $800 to $4,500 in monthly income. He is well on track to spread conspiracy theories on a full-time basis, assuming Substack maintains its policy of limited moderation.

This is important because Substack is not just an outlet for misinformation and extremism but also a key platform for their monetisation. The site has been highly successful in monetising online influence, which has contributed to its ability to attract and retain talented writers. It is difficult to say to what extent influencers on the platform are selling disinformation solely to generate subscription revenue. Regardless, several are earning significant sums, allowing them to sustain operations and encouraging profit-motivated actors to migrate. The Center for Countering Digital Hate has conservatively estimated that Substack generates at least $2.5 million in revenue from anti-vaccine newsletters per year.

Conclusion: An Inevitable Tipping Point

When Substack doubled down on its commitment to nearly unfettered free speech amid pressure in January 2022, it affirmed its status as the latest major alt-tech platform. The language used by the platform’s leaders when laying out its vision of content moderation is reminiscent of messaging from platforms like Twitter and Facebook, such as arguing for pure free speech in response to potential abuse. But Substack is different. While mainstream platforms are designed to capture the broadest possible audience and cohort of content creators, Substack’s core identity is providing a purely independent forum, especially for those who do not fit into the mainstream.

Recognising this and classifying the platform as alt-tech matters because it points to how the site is likely to evolve. A tipping point is inevitable as extreme actors continue to migrate to Substack due to increased moderation elsewhere, the platform’s stated policies, and the success of fringe influencers on the platform. When this comes, many of its writers and readers will likely leave due to concern over using or supporting a site saturated with extremism and misinformation. Such an exodus, combined with an observed growth of extremism on the platform, could turn the site into a purely fringe platform for low-quality, harmful information. At the same time, active, robust moderation could compromise the platform’s model of independence, which is built on a common hunger among its readers for unconventional, nonconformist views and a push to move away from mainstream thought.

Still, while free speech and a free press are cornerstones of American democracy, leaving moderation decisions almost entirely to users is unsustainable and potentially dangerous. Substack is already host to a large volume of content questioning the 2020 US election outcome. With continued attacks on Georgia Democratic gubernatorial candidate Stacey Abrams over her alleged role in facilitating fraud, locally focused conspiracies are poised to appear on the platform in 2022. Such continued discourse has the potential to further weaken faith in American institutions and democracy. Depending on the actual election results, this may or may not be the tipping point, but such an event is the inevitable reality of loosely moderated user-generated content.

Nathan Doctor is Intelligence Lead at Storyful and previously served as an OSINT analyst for the United States Department of Homeland Security.