Despite the often-decentralised nature of extremists and violent extremists in online spaces, there is clear evidence that they attempt to coordinate platform migration and provide instructional manuals on how fellow sympathisers should maintain operational security (op-sec) as they branch out onto other applications. Joining a new platform naturally involves a learning curve, and users unfamiliar with its security protocols and terms of service risk making mistakes. To reduce op-sec errors, users share messages containing security tips, create graphics addressing the potential pitfalls of various platforms, and post lengthy guidelines on how to set up accounts safely on other apps in the form of instructional PDFs, articles, and videos. Additionally, the wide array of available messaging apps has led extremists to gravitate towards multiple platforms simultaneously, assigning different objectives to specific apps.

Perhaps the clearest examples of such activities come from Islamic State (IS) supporters, although there have been recent instances of extreme right, white supremacist, and conspiracy milieus using similar strategies. This article focuses primarily on IS supporters’ online activities as a case study but also touches on the extreme right and white supremacists.

Islamic State Supporters

In November 2019, Europol initiated its most successful crackdown on pro-IS Telegram ecosystems, resulting in further decentralisation of pro-IS communities onto more obscure apps. Regarding the Europol campaign, a mass data report by Amarnath Amarasingam, Shiraz Maher, and Charlie Winter “demonstrates the hugely debilitating impact of the 2019 Action Days”, which “represented a serious, sustained, and existential threat to both the group [Islamic State] and its supporters.” From 2015 until the crackdown, Telegram had remained IS supporters’ platform of choice due to its encryption capabilities, file-sharing abilities, overall user-friendly structure, and the absence of the content moderation encountered on other social media sites such as Twitter and Facebook. Following the crackdown, however, supporters dispersed onto more niche spaces while also showing continued dedication to maintaining a presence on Telegram. As Michael Krona observes, “…the strategy of originating from one central hub (Telegram) for uploading content and then via invites and links directing users from other platforms…has been substituted by a more decentralised structure spanning over a large number of platforms.”

Although decentralised communication characterises this loose, grassroots coordination, established collectives dedicated to online security provide multilingual resources trusted by wider pro-IS communities. The Electronic Horizons Foundation (EHF), a group created to spread “security and technical awareness”, provides extensive downloadable step-by-step guides accompanied by visual instructions. To demonstrate how comprehensive these guides are, the thirty Arabic, English, and French EHF resources I archived in 2020 from EHF’s website (which is no longer online) are listed below:

- AF-WALL

- Basic Protection in IOS System

- Conversations

- Data Collection Techniques

- EDS

- Encrypting files on Android

- Explanation of Exiftool to Delete File Metadata in Windows Operating System

- Gajim

- General Application Permissions

- Google Play system

- I2P

- KeyLogger

- Lineage

- NVISO

- Ooniprobe

- OS Debian

- Pidgin

- Riot App

- Scan Suspicious Links

- Signal

- Sudo

- Talkatone

- Telegram

- Threema

- Twitter security settings

- Ultimate Guide of TOR

- VeraCrypt Program

- VPN Services for Android

- Zom

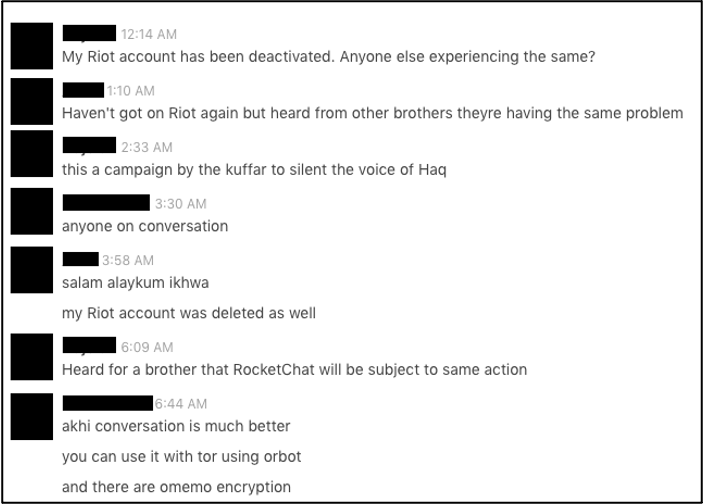

The multilingual focus on iOS/Android guidelines, the Tor browser, file encryption, and data collection techniques (presumed by IS supporters to be used by intelligence agencies), in addition to instructions on how to create new accounts, demonstrates an all-encompassing attention to security protocols. Alongside this more organised dissemination of material, informal discussion among IS supporters about the strengths and weaknesses of platforms continues; these conversations were particularly prominent following the 2019 Europol initiative:

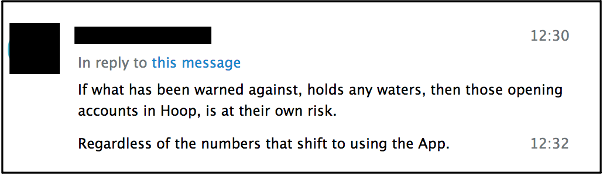

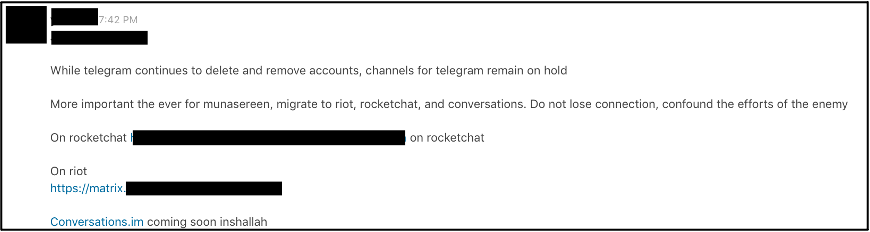

As the screenshot above from a private IS chatroom demonstrates, users employ layered security measures, and the ability to access an application via Tor, for example, adds to a platform’s appeal. In addition to security guidance, pro-IS networks place increasing emphasis on staying connected amid continuing waves of account bans and on avoiding platforms they view as problematic. Methods of connecting users and discouraging the use of certain apps include:

- Using Telegram and RocketChat as information hubs to distribute instructions/advice on which applications to use and avoid [Hoop in particular received negative reactions but, as noted by Suraj Ganesan, a noticeable presence of South Asia-focused channels continues to operate on the platform. IS Hoop channels also frequently cross-post Telegram links/bots/usernames as well as JustPaste.it and other file-sharing URLs.].

- Providing the contact information of a single user across multiple apps, who then assists newcomers in connecting with various pro-IS spaces.

- Sharing generic IS content links to chatrooms and channels across platforms.

- In anticipation of bans, creating multiple backup accounts and sharing this information internally with trusted ‘brothers’.

- Creating nondescript bots (i.e., not overtly pro-IS) dedicated solely to link-posting.

- Switching between apps, where one (e.g., Telegram) is used for generic conversation and another (e.g., Threema) for discussing more sensitive topics. A related method is switching to Telegram’s encrypted chat mode and setting a self-destruct timer for the chat log.

- Creating different usernames for each platform to make it more difficult to track an individual across platforms. The downside of this tactic is that it also makes it harder for sympathisers to find each other across apps, since they will not recognise a username unless it has previously been shared with them. Others prefer to keep the same username because of this hurdle.

Beyond attention to security protocols, efforts to maintain connections across platforms, and the continued circulation of propaganda, younger pro-IS supporters are creating subcultures centred on memes and aesthetics often associated with the alt-Right. Moustafa Ayad and his team note the purpose of this approach: “Since the loss of the Islamic State’s physical caliphate two years ago, ISIS supporters have been grappling with their diminished relevance online, reinventing their propaganda through a range of bizarre strategies from pornographic ultraviolence to meme-based shitposting.”

TikTok, an app that tends to draw younger demographics, contains ecosystems of pro-IS content that remain undetected by moderators. After I followed a handful of overtly pro-IS accounts, the algorithm began directing me towards similar accounts using well-known IS members, IS flags, and still images from propaganda videos as their profile photos. IS TikTok users create shitposts reminiscent of the alt-Right style described by Ayad. They also post ISISwave aesthetics, autotuned anashid, and short clips of official IS propaganda, among other types of messaging. Notably, the comment sections of these TikTok videos contain direct links to pro-IS Telegram spaces, where supporters aim to reconnect and converse privately rather than commenting back and forth in the TikTok thread. Some accounts maintain public profiles with innocuous hashtags such as #islam and #قط (cat), while others remain private.

More recently, online IS supporters drew attention when they established a presence on GETTR, displaying an openness to, as Chelsea Daymon explains, “trying any platform and seeing how friendly it is to them.” Beyond the appeal of trolling a conservative app, there is a strategic advantage in exploiting a platform that is encountering “difficulties in balancing its free speech ethos with growing demands to stop terrorist-related material…”

Apps such as Telegram, Element, and RocketChat often host closed ecosystems unavailable to the general public, while TikTok and Instagram serve to develop visual, aesthetic themes. Large pockets of supporters continue to exist on larger platforms such as Twitter and Facebook, but alternative apps with encryption capabilities remain a safer choice in terms of account longevity.

However, emphasising alternative apps has a downside: reduced accessibility to general audiences. Reliance on closed echo chambers limits opportunities for public outreach and for distributing propaganda to audiences beyond supporter networks. Nico Prucha documented a pro-ISIS media source, al-Wafa, which published a statement cautioning about the “strategic error” of limiting online activities to Telegram. It directed supporters to “return to Twitter and Facebook, for our missionary operations have greater reach on these platforms. Those we intend to influence are not on Telegram…” In another example, a six-page statement titled “The Mobile Bomb” from the unofficial Al-Azm Media Foundation made the rounds on Telegram in both Arabic and English. Echoing al-Wafa’s concerns, it complained about supporters operating within the comfort zone of Telegram but went further, directly deriding supporters for their “laziness.” It also advised supporters to “make Telegram as a platform to prepare and a bridge to cross over to Twitter and Facebook” and not to “make it [Telegram] a prison for yourself and your skills.” Notably, before the Europol campaign, bots and channels, including the well-known “Bank al Ansar,” focused on disseminating hacked Twitter and Facebook accounts and phone numbers for IS supporters to use.

In summary, apps serve a wide variety of purposes, and their significance to IS supporters is determined by informal, decentralised community consensus combined with ambiguous guidance from semi-centralised collectives like the Electronic Horizons Foundation. Nonetheless, the wider priorities of these various pro-IS communities coalesce around three prominent concerns: security, connectivity, and the continued output of both official and unofficial propaganda. Even through waves of deplatforming, striking a balance between remaining on familiar, comfortable closed apps and expanding outreach to a wider public remains a point of tension for the ‘virtual Caliphate.’

On a final note, although this article focuses on virtual pro-IS spaces, examining how on-the-ground realities, together with online anti-terrorism campaigns (such as Europol’s November 2019 campaign), affect official online propaganda output provides crucial insight into how virtual realities and the ‘real’ world intertwine. For further reference, see “Censoring Extremism: Influence of Online Restriction on Official Media Products of ISIS” by Kayla McMinimy, Carol Winkler, Ayse Lokmanoglu, and Monerah Almahmoud.

Brief Observations on the Extreme Right and White Supremacist Online Spaces

Extreme right and white supremacist supporters do not face the same level of restrictions and deplatforming efforts as pro-IS supporters. That said, Maura Conway succinctly explains the limitations of such comparisons. Turning to cross-platform behavioural patterns, a brief look at how these communities respond to moderation efforts and instruct their own adherents on online safety measures offers some preliminary insights into improvisation and adaptation by extremists beyond IS.

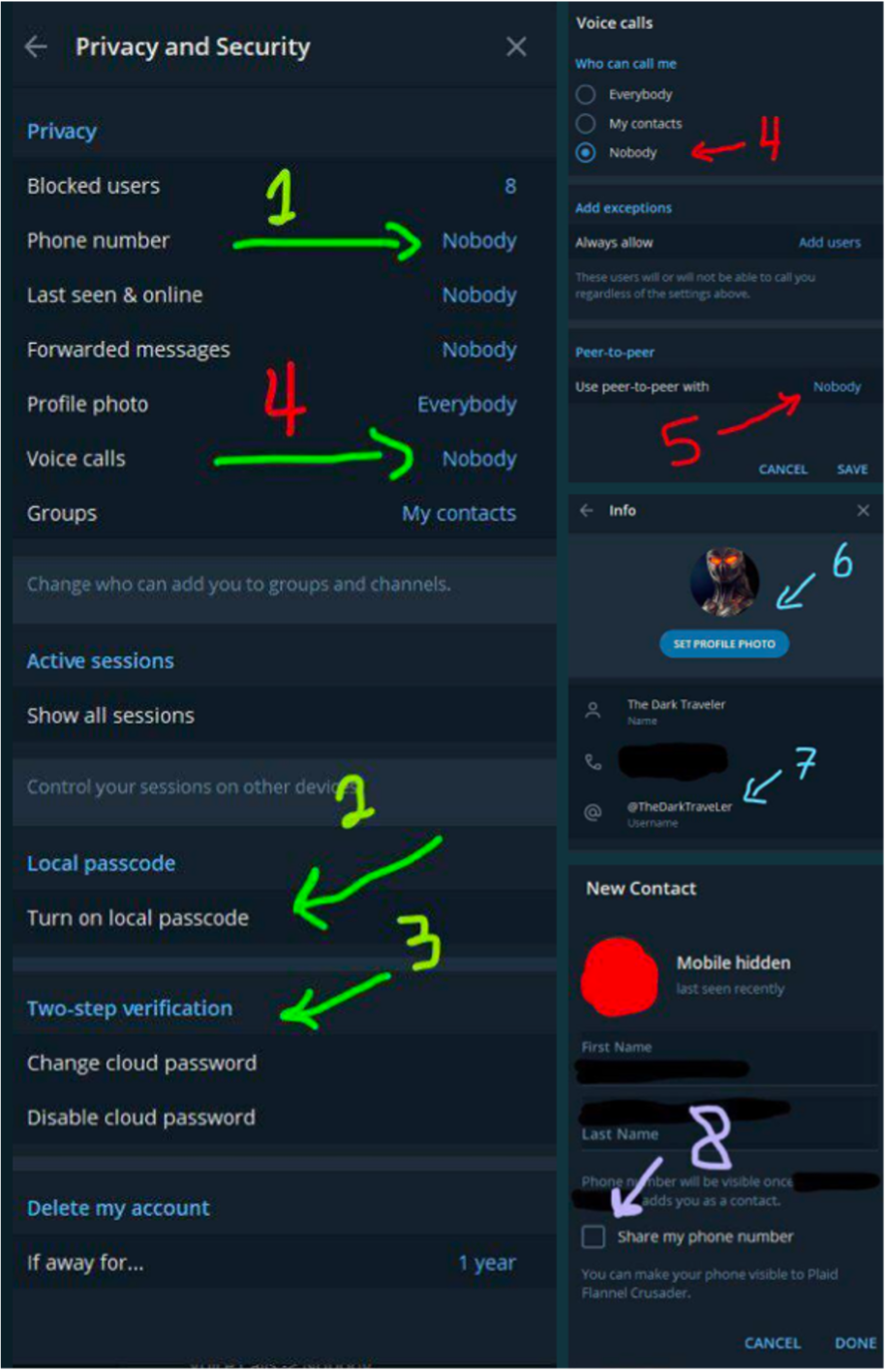

Following the 6 January 2021 Capitol insurrection, the suspension of former President Donald Trump’s Twitter account, and the removal of Parler by Amazon, Apple, and Google, Telegram and a lesser-known app called MeWe experienced a surge in downloads from Apple’s App Store. In anticipation of new users, extreme right and white supremacist Telegram spaces circulated op-sec guides with screenshot instructions on how to switch to the most secure settings. They also encouraged users to create accounts with fake numbers, avoid using real profile photos, and exercise caution in chats. In late 2018, while observing these Telegram spaces, I encountered what appeared to be surprisingly frequent instances of people using selfies as profile photos and displaying their phone numbers on their accounts. Now, however, I rarely see this, and although these observations are anecdotal, privacy and security are clearly priorities for these communities.

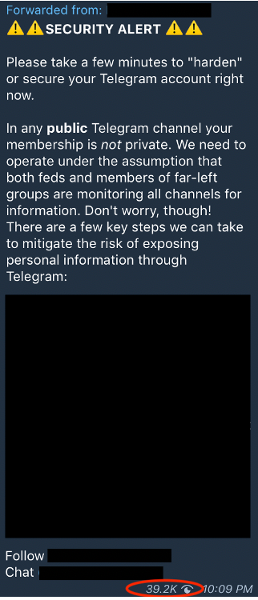

As demonstrated below, security guidance does not need to reach the level of sophistication employed by pro-IS networks to be instructive. Note the 39.2K view count for the “Security Alert” message which received wide circulation:

As with pro-IS supporters, extreme right and white supremacist accounts also cross-post links from a variety of platforms, attempt to establish themselves on multiple apps, and express distrust of apps they view as insecure or unstable because of their policy guidelines and data storage practices. This topic merits further examination, but this brief survey of tactics hopefully serves as an introduction.

Conclusion

Regardless of ideology, extremists’ ability to maintain an online presence, and their effectiveness in doing so, rely on a degree of flexibility and creativity. Factors such as the presence or absence of an official terrorist group designation, individual platform policies and deplatforming efforts, desires for anonymity, and users’ own levels of direct engagement in these spaces influence online behavioural patterns. Extremists coordinate across platforms to maintain complex webs of ecosystems, and they even create spaces where exchanges occur across ideological boundaries. Researchers, practitioners, and policymakers must continue their wide-net observational approaches to note rhetorical, narrative, and platform migration shifts developing within extremist milieus. Finally, proposed key takeaways include:

- Different platforms often hold distinct appeals for extremists for a variety of reasons, including encryption capabilities, levels of content moderation, user-friendliness, and file-sharing capabilities.

- Open and closed online ecosystems each have strengths and weaknesses that extremists must take into account.

- Cross-platform link-sharing and platform migration patterns can reflect shifting dynamics, giving further insight into the push/pull factors raised in the first point.

- Virtual extremist ecosystems are characterised by interconnectivity and cross-pollination of content from one platform to the next.

- Users employ layered security measures (e.g., accessing websites via Tor).

- Maintaining an online presence is viewed as a victory.

- Sub-ideologies within a general extremist ideological umbrella may maintain their own distinct niche spaces.

- Advice on online security and on branching out onto new platforms can emerge organically at decentralised levels (reposts of general messages/graphics or informal conversations among anonymous users), while in other cases it may come from more centralised nodes (collectives with unofficial media ‘brands’ like the Electronic Horizons Foundation, or prominent accounts recognised in the community).

- The decentralised nature of extremist ecosystems allows cross-ideological zones to develop on the fringes where, for example, white supremacists and Salafi-jihadists may exist in shared spaces.